Agent memory that learns

With Hindsight, your agents remember your users and get better at their jobs over time. Open source, MIT licensed.

The fundamentals

Every other memory system stores facts and retrieves them. That works until your agent encounters the same problem twice and makes the same mistake both times.

LongMemEval scores across four memory systems. Peer-reviewed benchmark, independently reproduced.

Tell Claude Code or Cursor to add Hindsight. It reads the docs, writes the config, and sets up its own memory. One command. No boilerplate.

npx add-skill vectorize-io/hindsight --skill hindsight-docs

Installing hindsight-docs skill...

✓ Connected to Hindsight MCP server

✓ Memory tools registered (remember, recall, reflect)

Ready. Your agent now has memory.

Works with any MCP-capable agent. Your agent gets remember, recall, and reflect tools automatically.
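
The toy, in-process sketch below makes the three tool roles concrete. It is not Hindsight's API or its MCP tool schema. The `ToyMemory` class, its method signatures, the `outcome` field, and the word-overlap matching are all hypothetical. They exist only to show how `reflect` differs from plain `recall`: `recall` returns stored items, while `reflect` turns past failures into guidance, so the agent does not make the same mistake twice.

```python
from dataclasses import dataclass, field


@dataclass
class Memory:
    """One stored observation. `outcome` is a hypothetical label: "success", "failure", or "neutral"."""
    text: str
    outcome: str = "neutral"


@dataclass
class ToyMemory:
    """Hypothetical in-memory stand-in for remember / recall / reflect tools (not Hindsight's API)."""
    items: list[Memory] = field(default_factory=list)

    def remember(self, text: str, outcome: str = "neutral") -> None:
        # Store the raw observation as-is; no summarization happens here.
        self.items.append(Memory(text, outcome))

    def recall(self, query: str) -> list[Memory]:
        # Naive retrieval: return memories that share at least one word with the query.
        words = set(query.lower().split())
        return [m for m in self.items if words & set(m.text.lower().split())]

    def reflect(self, query: str) -> str:
        # One step beyond retrieval: turn relevant past failures into advice for the next attempt.
        failures = [m.text for m in self.recall(query) if m.outcome == "failure"]
        if not failures:
            return "No relevant past failures."
        return "Avoid repeating: " + "; ".join(failures)


if __name__ == "__main__":
    mem = ToyMemory()
    mem.remember("deploy failed because migrations ran after the app started", "failure")
    mem.remember("deploy succeeded after running migrations first", "success")
    print(mem.reflect("deploy the service"))
    # Avoid repeating: deploy failed because migrations ran after the app started
```

A real system would use semantic search and model-driven synthesis rather than word overlap. The point of the sketch is only the split between storing, retrieving, and learning.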

Most agent memory systems were built for chatbots. Those days are gone.

Today's agents work together, attend meetings, learn from each other, and take on increasingly complex tasks. They need memory that compounds across every agent and every user. Retrieval-only systems won't get you there.
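
Memory that compounds across agents and users can be pictured as a single store that every agent writes to and reads from, partitioned by user. The sketch below is hypothetical. The `SharedBank` name, its `(agent_id, text)` records, and the substring match are illustrative assumptions, not how Hindsight stores data. It shows only that a note recorded by one agent becomes available to another agent serving the same user, while remaining invisible to other users.

```python
from collections import defaultdict


class SharedBank:
    """Hypothetical memory store shared by all agents, partitioned by user."""

    def __init__(self) -> None:
        # user_id -> list of (agent_id, text) pairs, in insertion order
        self._by_user: dict[str, list[tuple[str, str]]] = defaultdict(list)

    def remember(self, user_id: str, agent_id: str, text: str) -> None:
        # Any agent can write; the note is scoped to the user, not to the agent that wrote it.
        self._by_user[user_id].append((agent_id, text))

    def recall(self, user_id: str, keyword: str) -> list[tuple[str, str]]:
        # Any agent can read everything stored for this user, regardless of who wrote it.
        # .get() avoids creating an empty entry for unknown users.
        notes = self._by_user.get(user_id, [])
        return [(a, t) for a, t in notes if keyword.lower() in t.lower()]


if __name__ == "__main__":
    bank = SharedBank()
    # A support agent learns a preference during a call...
    bank.remember("alice", "support-agent", "Alice prefers invoices as PDF")
    # ...and a billing agent can recall it later without being told directly.
    print(bank.recall("alice", "invoice"))  # [('support-agent', 'Alice prefers invoices as PDF')]
    print(bank.recall("bob", "invoice"))    # []
```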

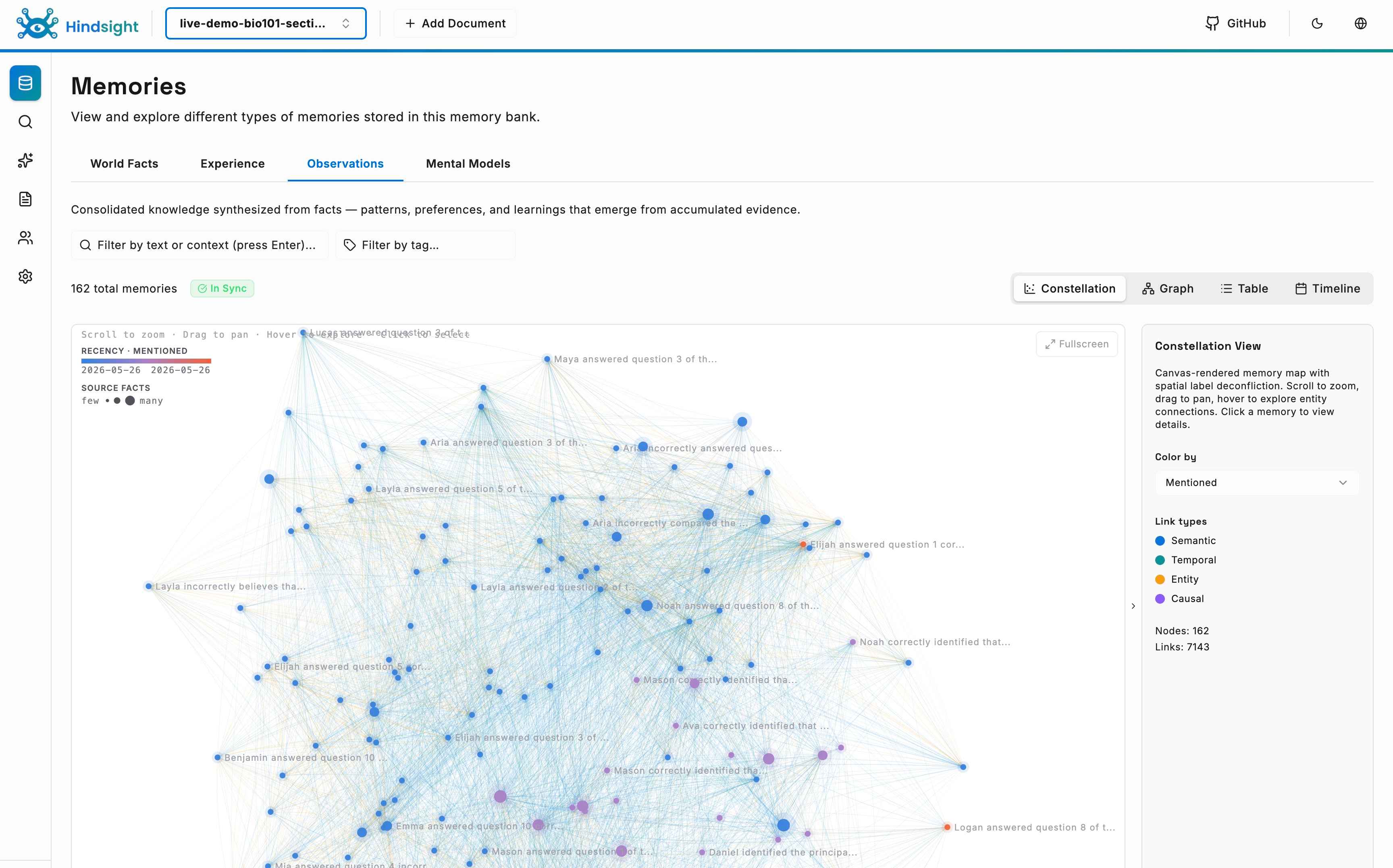

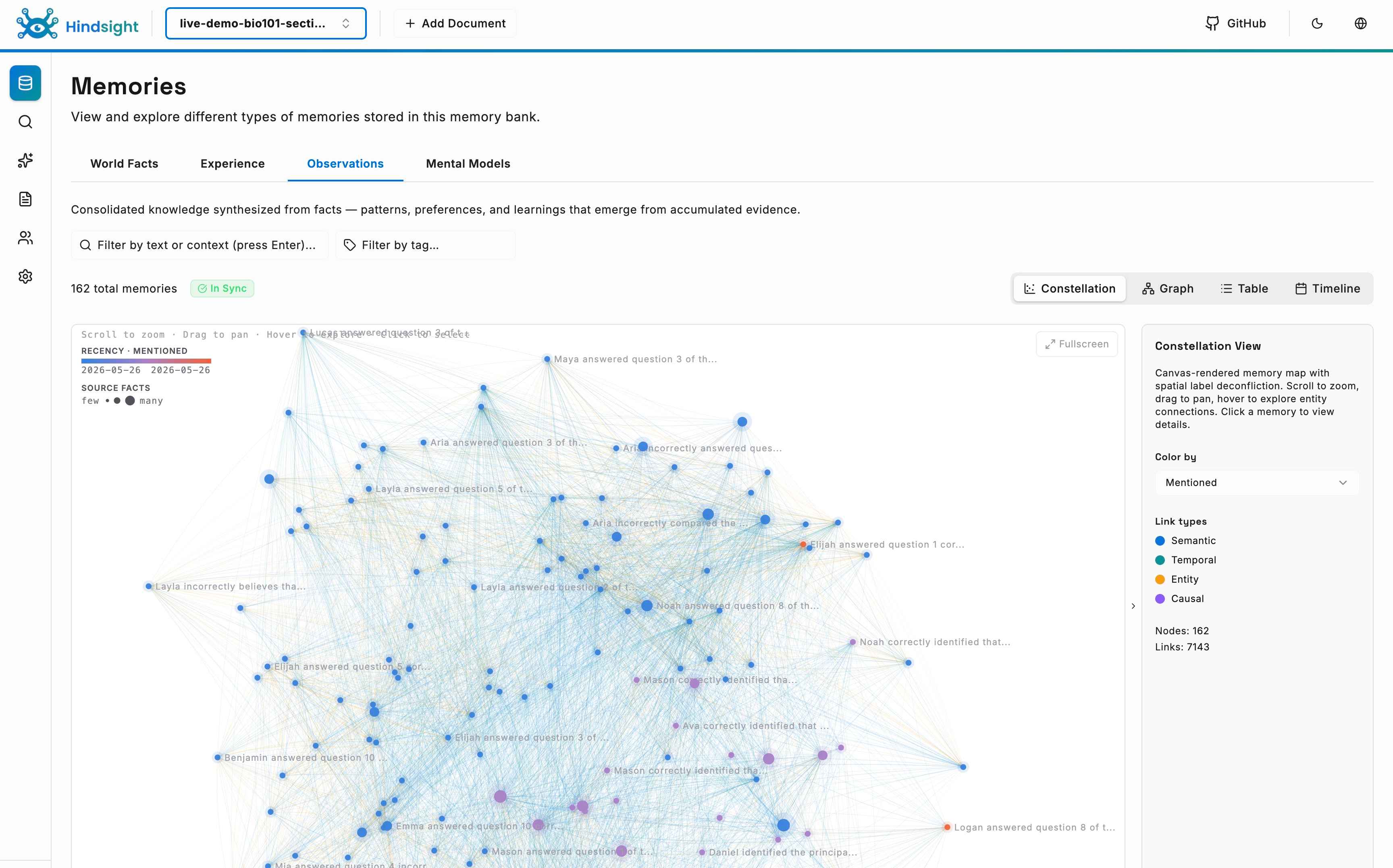

What compound memory looks like

We offer hands-on implementation services, team training, and architecture consulting to get your agents learning faster. Whether you're integrating Hindsight into an existing system or starting from scratch, we'll work with you directly.