MemPalace vs Hindsight: AI Agent Memory Compared

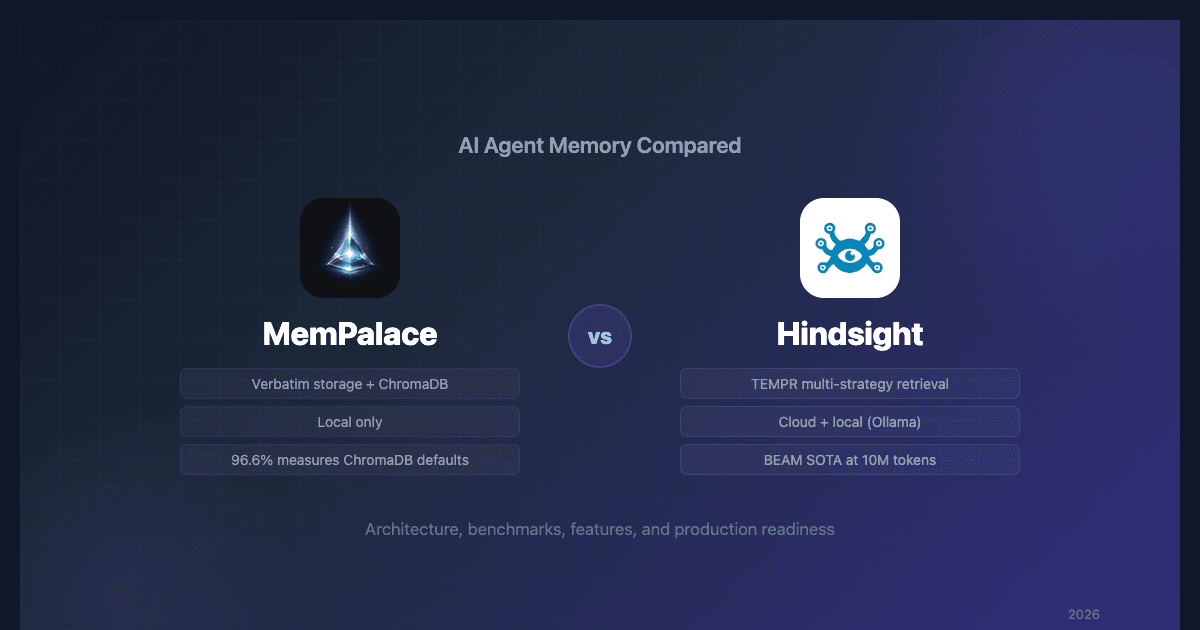

Two agent memory systems are dominating the conversation in April 2026: MemPalace, the viral open-source project by Milla Jovovich and Ben Sigman, and Hindsight, the production-grade memory system by Vectorize. Both use MCP (Model Context Protocol) to give AI agents persistent, cross-session memory. But they take fundamentally different approaches to storing, organizing, and retrieving that memory.

This MemPalace vs Hindsight comparison breaks down architecture, benchmarks, features, and production readiness so you can pick the right system for your use case.

Quick Comparison

| Dimension | MemPalace | Hindsight |
|---|---|---|
| Creator | Milla Jovovich & Ben Sigman | Vectorize.io |
| Release | April 5, 2026 | 2025 (ongoing development) |
| License | Open-source | Open-source + cloud |
| Approach | Verbatim storage + ChromaDB search | Structured extraction + multi-strategy retrieval |
| Deployment | Local only | Cloud + local (Ollama) |
| MCP Setup | Python env + hooks | Native OAuth 2.1 or local with embedded pg0 |
| GitHub Stars | 19,500+ | Growing steadily |

Architecture: How Each System Stores Memory

MemPalace: The Palace Metaphor

MemPalace organizes memories using the ancient Method of Loci. Conversations are stored verbatim in a spatial hierarchy of Wings (projects), Rooms (topics), Halls (memory types), and Drawers (individual entries). Everything runs on ChromaDB for vector search and SQLite for metadata.

The philosophy is simple: store everything, summarize nothing, search when needed.

It's an appealing concept, but independent analysis has found that the palace structure is closer to a UI metaphor than a retrieval mechanism. When you search for a memory, MemPalace ranks results by ChromaDB's default embedding distance. The wings, rooms, and halls only add metadata filtering, a standard database technique that narrows the candidate set but does not change how semantic similarity is computed.
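The filter-then-rank behavior described above can be illustrated with a minimal sketch. This is not MemPalace's code: the `DRAWERS` data, the `search` function, and the toy two-dimensional vectors are all hypothetical stand-ins for real embeddings and a real vector store. The point is only that a metadata filter changes which entries are candidates, while the ordering still comes from embedding similarity alone.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


# Hypothetical drawers: toy 2-d vectors stand in for real embeddings.
DRAWERS = [
    {"id": "d1", "wing": "website", "room": "auth", "vec": [1.0, 0.0]},
    {"id": "d2", "wing": "website", "room": "billing", "vec": [0.8, 0.6]},
    {"id": "d3", "wing": "mobile", "room": "auth", "vec": [0.0, 1.0]},
]


def search(query_vec, top_k=2, **where):
    # Step 1: the metadata filter only narrows the candidate set.
    candidates = [
        d for d in DRAWERS if all(d.get(key) == value for key, value in where.items())
    ]
    # Step 2: ranking is plain embedding similarity, with or without the filter.
    ranked = sorted(candidates, key=lambda d: cosine(query_vec, d["vec"]), reverse=True)
    return [d["id"] for d in ranked[:top_k]]


print(search([1.0, 0.1]))               # ['d1', 'd2']
print(search([1.0, 0.1], room="auth"))  # ['d1', 'd3']
```

In both calls the ranking function is identical; the `room` filter simply removes `d2` from consideration.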

Hindsight: Structured Extraction + Knowledge Graph

Hindsight takes the opposite approach. Rather than storing conversations verbatim, it extracts structured facts, resolves entities, builds a knowledge graph of relationships, and uses cross-encoder reranking to surface the most relevant memories.

The core API revolves around three operations:

- retain — Store a memory with automatic entity extraction and relationship tracking

- recall — Multi-strategy retrieval across all stored memory

- reflect — Reason over accumulated memories to generate insights

Where MemPalace gives you a searchable archive, Hindsight gives you a structured understanding that grows smarter over time.

Retrieval: Single-Strategy vs Multi-Strategy

This is where MemPalace and Hindsight differ most.

MemPalace: ChromaDB Embedding Distance

MemPalace retrieval is straightforward: take the query, embed it with ChromaDB's default model, find the closest vectors. There's no BM25, no reranking, no hybrid search. The palace metadata can filter results to specific wings or rooms, but the core retrieval is a single embedding lookup.

This works at small scale for queries that are semantically similar to the stored text. But MemPalace's LoCoMo benchmark run reveals a deeper issue: the system used top_k=50 against datasets of 19–32 sessions, so it effectively retrieved everything and handed it to the LLM. That works when your corpus fits in context. After a year of daily agent conversations (tens of thousands of sessions, millions of tokens), retrieve-everything stops working: the corpus no longer fits in the context window, and there is no selective retrieval to fall back on.
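To see why a top_k larger than the corpus makes the retrieval score uninformative, consider the small sketch below. The `recall_at_k` helper and the session names are hypothetical and exist only for illustration. Even with the worst possible ranking, where the relevant session comes last, recall is perfect once top_k exceeds the number of sessions.

```python
def recall_at_k(ranked_ids, relevant_ids, top_k):
    """Fraction of relevant items that appear in the first top_k results."""
    retrieved = set(ranked_ids[:top_k])
    return len(retrieved & set(relevant_ids)) / len(relevant_ids)


# A corpus of 32 sessions, the upper end of the range cited above.
corpus = [f"session-{i}" for i in range(32)]
relevant = ["session-31"]

# Worst-case ranking: the one relevant session is ranked last.
worst_ranking = corpus

print(recall_at_k(worst_ranking, relevant, top_k=50))  # 1.0
print(recall_at_k(worst_ranking, relevant, top_k=5))   # 0.0
```

With top_k=50 the ranking quality never enters the result, so the score says nothing about how well the retriever would perform on a corpus that is larger than top_k.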

Hindsight: TEMPR Multi-Strategy Retrieval

Hindsight uses TEMPR — a fusion of four retrieval strategies:

- Semantic search — Vector similarity, like MemPalace

- Keyword search — BM25-style term matching for precision

- Graph traversal — Following entity relationships across memories

- Temporal reasoning — Native support for time-based queries

Results from all four strategies are combined through Reciprocal Rank Fusion (RRF), then re-scored with cross-encoder reranking. This means Hindsight handles diverse query types that would fail on a single-strategy system.
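Reciprocal Rank Fusion itself is a simple, well-known formula: each item scores the sum of 1 / (k + rank) over every ranking in which it appears. The sketch below is a generic implementation, not Hindsight's code. The constant k=60 is the value commonly used in the literature (Hindsight's actual setting is not stated here), the memory IDs are made up, and the cross-encoder reranking step is omitted.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of IDs into one list, best first."""
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, item_id in enumerate(ranking, start=1):
            scores[item_id] += 1.0 / (k + rank)
    # Sort by descending score; break ties by ID for deterministic output.
    return sorted(scores, key=lambda item_id: (-scores[item_id], item_id))


# Hypothetical outputs of four retrieval strategies for one query.
semantic = ["m1", "m2", "m3"]
keyword = ["m2", "m4", "m1"]
graph = ["m2", "m3"]
temporal = ["m4", "m2"]

fused = reciprocal_rank_fusion([semantic, keyword, graph, temporal])
print(fused)  # ['m2', 'm4', 'm1', 'm3']
```

Here `m2` wins because it ranks well in all four lists, even though it is not first in the semantic list. That is the behavior that lets a fused system answer queries a single strategy would miss.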

Critically, this architecture scales. Hindsight was recently evaluated on the BEAM benchmark, which tests memory at up to 10 million tokens, a scale at which the corpus cannot simply be stuffed into a context window. Hindsight scored 64.1% at the 10M tier, 23.5 percentage points ahead of the next-best system (Honcho at 40.6%), a relative lead of about 58%. Its accuracy actually improved from 500K to 1M tokens before degrading gracefully at 10M. MemPalace has no published results at this scale because its retrieve-all approach can't operate there.

Benchmarks: What the Numbers Actually Mean

Both systems cite LongMemEval scores, but comparing them requires a careful look at methodology.

| Metric | MemPalace | Hindsight | Notes |
|---|---|---|---|
| LongMemEval (reported) | 96.6% (raw) / 100% (hybrid) | 91.4% | Different measurement approaches |
| BEAM (10M tokens) | Not tested | 64.1% (SOTA) | MemPalace's retrieve-all can't scale to 10M |
| What's being measured | Small-corpus retrieval (top_k > corpus) | Full system (TEMPR + entity resolution + reranking) | |
| Palace features enabled | 89.4% (regression) | N/A | MemPalace's novel features reduce accuracy |
| AAAK compression | 84.2% (12.4pp loss) | N/A | "Lossless" compression is lossy |
| LongMemEval methodology | Hand-tuned for 3 failing questions | Standard benchmark run | MemPalace's 100% violates integrity guidelines |
| LoCoMo | 100% (top_k > corpus size) | Not reported | MemPalace's LoCoMo bypasses retrieval |

The headline takeaway: MemPalace's scores were achieved on small datasets where retrieving everything is viable. Hindsight's 91.4% measures the full system with all retrieval strategies engaged. And at real-world scale, there's no contest — Hindsight's BEAM results at 10M tokens (64.1%, SOTA) demonstrate that its architecture works when retrieve-everything stops being an option.

Independent reproduction on an M2 Ultra (GitHub Issue #39) confirmed that palace features cause accuracy regression. The lhl/agentic-memory analysis concluded the 96.6% score "measures ChromaDB's default embedding model performance, not MemPalace itself."

Feature Comparison

Entity Resolution and Knowledge Graph

| Capability | MemPalace | Hindsight |
|---|---|---|
| Entity extraction | Naive slug conversion ("alice_obrien") | Automatic extraction with relationship tracking |
| Knowledge graph | Flat triple lookups, no multi-hop | Multi-hop traversal |
| Contradiction detection | Described in README, not implemented | Active |
| Fact checking | fact_checker.py exists but unwired | Integrated |
| Entity linking | None | Cross-conversation entity resolution |

MemPalace's README describes a knowledge graph with contradiction detection and fact checking. Code review shows these features aren't functional in the codebase: knowledge_graph.py has zero occurrences of "contradict," and fact_checker.py exists but isn't connected to any operations.

Hindsight's knowledge graph is functional — entities are extracted, relationships are tracked, and multi-hop traversal works as documented.
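The difference between a flat triple lookup and multi-hop traversal can be shown with a toy graph. Neither function below is taken from either project: the triples, `flat_lookup`, and `multi_hop` are hypothetical, and a breadth-first search is used here only as one simple way to follow relationships across several hops.

```python
from collections import deque

# Hypothetical (subject, predicate, object) triples.
TRIPLES = [
    ("alice", "works_on", "billing_service"),
    ("billing_service", "depends_on", "postgres"),
    ("postgres", "maintained_by", "bob"),
]


def flat_lookup(subject):
    """Return only the facts stored directly on the subject (one hop)."""
    return [(p, o) for s, p, o in TRIPLES if s == subject]


def multi_hop(start, max_hops=3):
    """Breadth-first traversal: every entity reachable within max_hops."""
    seen = {start}
    queue = deque([(start, 0)])
    reached = []
    while queue:
        node, depth = queue.popleft()
        if depth == max_hops:
            continue  # do not expand beyond the hop limit
        for s, _, o in TRIPLES:
            if s == node and o not in seen:
                seen.add(o)
                reached.append((o, depth + 1))
                queue.append((o, depth + 1))
    return reached


print(flat_lookup("alice"))  # [('works_on', 'billing_service')]
print(multi_hop("alice"))    # [('billing_service', 1), ('postgres', 2), ('bob', 3)]
```

A question such as "who maintains the database behind Alice's service?" needs the three-hop path to `bob`, which the flat lookup never sees.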

Mental Models and Learning

Hindsight includes a feature MemPalace doesn't attempt: mental models. These are structured representations of understanding that auto-update as your agent accumulates memories. Over time, your agent doesn't just recall facts — it develops and refines its understanding of topics, people, and projects.

MemPalace stores memories but doesn't build understanding from them.

Disposition Traits

Hindsight lets you configure how your agent interprets and weighs memories through three disposition traits:

- Skepticism — How critically should the agent evaluate new information?

- Literalism — How literally should it interpret statements?

- Empathy — How much weight should emotional and social context carry?

This gives teams fine-grained control over memory behavior that MemPalace doesn't offer.

Setup and Integration

MemPalace Setup

MemPalace requires:

- Python environment setup

- ChromaDB installation

- MCP server configuration

- Hook configuration for your AI client

- Manual palace structure initialization

The project has documented issues with MCP integration — it writes human-readable startup text to stdout instead of stderr, corrupting JSON message streams in Claude Desktop.
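The stdout problem is easy to picture: with MCP's stdio transport, the client reads newline-delimited JSON-RPC messages from the server's stdout, so any other text on that stream breaks parsing. The sketch below shows the usual remedy, which is to route logs to stderr. It is a generic illustration, not MemPalace's code or a fix taken from its repository; the logger name, `send_message`, and `start` are hypothetical.

```python
import json
import logging
import sys

# Send all human-readable log output to stderr, never stdout.
logging.basicConfig(stream=sys.stderr, level=logging.INFO)
log = logging.getLogger("memory-server")  # hypothetical logger name


def send_message(payload, out=sys.stdout):
    """Write one JSON-RPC message per line to the protocol stream."""
    out.write(json.dumps(payload) + "\n")
    out.flush()


def start(out=sys.stdout):
    # Startup banner goes through the logger, so it lands on stderr.
    log.info("memory server starting")
    # Only protocol messages are ever written to the protocol stream.
    send_message({"jsonrpc": "2.0", "id": 1, "result": {}}, out)


if __name__ == "__main__":
    start()
```

With this split, every line the client reads from stdout parses as JSON, and the startup text is still visible in the client's server logs.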

Hindsight Setup

Cloud: Connect through native OAuth 2.1 from any MCP client (Claude Code, Claude Desktop, Cursor, Windsurf, ChatGPT). Authorize and start using retain/recall/reflect. No local dependencies.

Local: Hindsight runs an embedded PostgreSQL (pg0) — no separate database to install or manage. Pair with Ollama for fully local operation with no API keys.

| Setup Factor | MemPalace | Hindsight |
|---|---|---|
| Time to first memory | 15-30 minutes | Under 5 minutes |
| Dependencies | Python, ChromaDB, SQLite | None (OAuth) or Ollama (local) |
| MCP Integration | Manual configuration | Native OAuth 2.1 |
| Claude Desktop | Buggy (stdout corruption) | Works out of box |
| Cursor/Windsurf | Manual hooks | Native OAuth 2.1 |

Production Readiness

| Factor | MemPalace | Hindsight |
|---|---|---|
| Age at viral launch | ~7 days | Months of active development |
| Commits | 7 at launch | Active, frequent releases |
| Test coverage | 4 test files for 21 modules | Comprehensive |
| Input sanitization | None (prompt injection risk) | Yes |
| Write gating | None | Yes |
| Documentation | README exceeds implementation | Accurate to codebase |
| Cloud deployment | No | Yes |
| Scaling | O(n) palace graph, retrieve-all breaks at scale | BEAM SOTA at 10M tokens (64.1%) |

MemPalace was created days before its public release with minimal test coverage. Features described in documentation don't match the codebase. There's no input sanitization, creating a prompt injection surface.

Hindsight is a production system with active development, accurate documentation, and security hardening appropriate for real workloads.

What MemPalace Does Well

A fair MemPalace vs Hindsight comparison should acknowledge where MemPalace shines:

- Local-first privacy — Everything runs on your machine with zero API costs. For developers who won't send data to any cloud, this matters.

- Verbatim storage — No information is lost through summarization. If you need exact conversation recall, this approach preserves everything.

- The palace metaphor — While it doesn't improve retrieval, the spatial organization is an intuitive way for humans to think about memory structure.

- Community response — After criticism, the team acknowledged problems publicly and updated their claims. That's good open-source citizenship.

Verdict: Which Should You Choose?

Choose Hindsight if you need agent memory for real work. The multi-strategy retrieval, real entity resolution, mental models, and production-grade security make it the clear choice for teams building on top of AI agents. Setup takes minutes through OAuth or locally with embedded pg0, and you get the flexibility of cloud or local deployment.

Choose MemPalace if you want a local-only, zero-cost memory experiment and don't mind the early-stage maturity, missing features, and benchmark caveats. The palace metaphor is interesting, and the ChromaDB-based retrieval does work — just not as well as the marketing suggests.

For most teams evaluating MemPalace vs Hindsight, the decision comes down to this: do you need a viral experiment or a production system? Hindsight is the production system.
