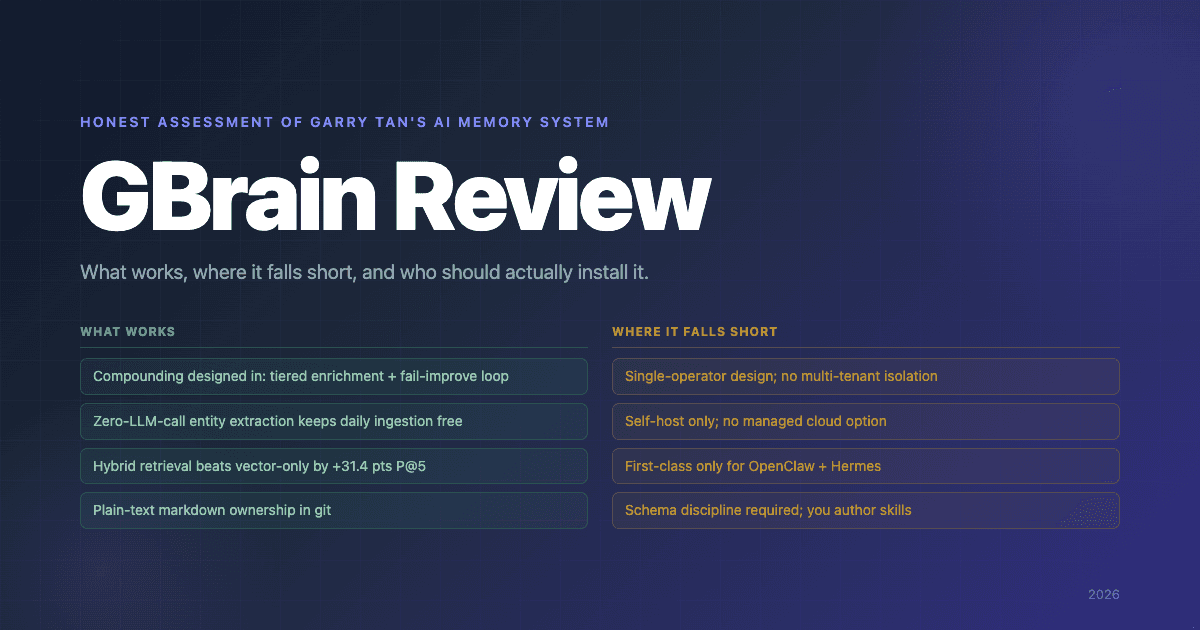

GBrain Review: An Honest Assessment of Garry Tan's Brain

GBrain is the open-source AI agent memory system Y Combinator CEO Garry Tan released on April 5, 2026. It cleared roughly 5,000 GitHub stars in 24 hours, sits at roughly 14,000 stars at the time of writing, and arrives with the rare combination of meaningful technical substance and a celebrity-CEO halo that drives adoption past the usual developer-tools niche.

This GBrain review is not a takedown. The headline numbers are honest, the architecture is thoughtful, and the underlying engineering is more polished than most v0.30 open-source projects. But it's also opinionated software with specific design choices that don't fit every team — and the launch hype has framed it as "agent memory for everyone," which it isn't. The goal here is to identify, specifically, what GBrain does well, what's genuinely clever, where the real gaps are, and who should actually install it.

Verdict in One Paragraph

GBrain is the best open-source markdown-first personal brain available right now if you run OpenClaw or Hermes Agent, value plain-text ownership of your knowledge, and have the discipline to author skill workflows as your schema evolves. It is also young (~v0.30, frequent breaking changes), single-operator by design, self-host only, and structurally a different product from production agent memory platforms — which makes it the right pick for a real but narrow audience and a poor pick for everyone else.

Quick Scorecard

| Dimension | Rating | Notes |

|---|---|---|

| Architecture | 5 / 5 | Three-layer design (markdown to Postgres retrieval to skills) is clean and well-reasoned |

| Retrieval quality | 4 / 5 | Hybrid search + RRF + 4-layer dedup; strong BrainBench numbers; no multi-hop graph or temporal |

| Cost efficiency | 5 / 5 | Zero-LLM-call entity extraction, deterministic classifiers, fail-improve loop |

| Day-one experience | 4 / 5 | 30-min install via PGLite; brain itself starts empty unless you import |

| Long-term value | 5 / 5 | Genuinely compounds if you commit to it |

| Documentation | 4 / 5 | Strong README, candid about install gotchas; some tribal knowledge in skill files |

| Integration breadth | 2 / 5 | First-class only for OpenClaw + Hermes; everything else is build-it-yourself |

| Multi-tenant readiness | 1 / 5 | Not the design center |

| Maturity | 3 / 5 | Frequent breaking changes; young codebase |

| Honesty of marketing | 5 / 5 | Published numbers match what the code does |

What GBrain Does Well

A few things GBrain does meaningfully better than the typical young open-source memory project.

1. Compounding Is Designed In, Not Bolted On

The single most distinctive feature of GBrain is that the system gets smarter on its own through three reinforcing mechanisms:

Tiered enrichment. A person mentioned once gets a stub page (Tier 3). After three mentions across different sources, they get web + social enrichment (Tier 2). After a meeting or eight-plus mentions, full pipeline (Tier 1). The brain learns who matters without being told.

Fail-improve loop. Every LLM fallback for a classification task is logged, and better regex patterns are generated from the failures. Over time, GBrain runs cheaper on the same workload — the opposite of most LLM-driven systems, where costs creep upward.

Backlink-boosted ranking. Pages other brain pages link to get a retrieval lift. This emerges naturally from the typed-edge extraction (next section) and means that as the brain's link density grows, frequently-referenced pages surface more readily.

These three loops are why GBrain is a credible answer for someone willing to commit to running it for months. They're also why you won't see the same value from a one-week trial.

2. Zero-LLM-Call Entity Extraction

Every page write extracts typed entity references — predicates like attended, works_at, invested_in, founded, and advises — using regex and string-matching rules with no LLM calls per write. This is the architectural choice that makes daily ingestion essentially free in tokens. Tan's published Minions benchmark in gbrain-evals shows large ingestion runs completing for $0 in tokens.

The trade-off is that the entity vocabulary is constrained to what the rules cover. For a personal brain at one-operator scale, that's the right call — it stays cheap as your corpus grows. For a multi-tenant memory platform serving thousands of writes per minute with arbitrary entity types, you'd want learned extraction with entity resolution. GBrain made the right choice for the scale it targets.

3. Hybrid Retrieval That Beats Vector-Only by a Lot

Per the architecture documented in docs/architecture/infra-layer.md, GBrain combines:

- HNSW cosine similarity over pgvector embeddings

- Postgres

tsvectorkeyword search withts_rank(title weighted A, compiled-truth section B, timeline C) - Reciprocal Rank Fusion:

score = Σ(1 / (60 + rank)) - 4-layer dedup

- Backlink-boosted ranking

- Optional Claude Haiku query expansion (2 alternative phrasings)

GBrain's published BrainBench numbers report P@5 49.1% and R@5 97.9% on a 240-page Opus-generated corpus, beating the same system with the graph layer disabled by +31.4 points P@5, and beating ripgrep-BM25 + vector-only RAG by a similar margin. The takeaway: the typed-edge graph contributes more retrieval lift than the hybrid search alone. That's a meaningful and rare result — most projects ship hybrid search and then stop.

4. Plain-Text Ownership

The brain repo is Markdown in git. You can git diff what your agent learned overnight, branch your brain to experiment with re-organization, and review writes line-by-line in your text editor. If the database disappears, you rebuild it from the repo. This is a fundamentally different relationship to your memory than a structured-store-only system gives you, and for a meaningful audience (writers, researchers, analysts, founders) it's the deciding feature.

5. Production Infrastructure for a Young Project

A few details that punch above the project's age:

- Minions (canonical as of v0.11.1) — Postgres-native job queue separating deterministic background work from judgment work. Sub-second median runtime vs gateway timeout for the same workload, durable across restarts, zero LLM tokens for the deterministic path.

- Durable agents (

gbrain agent, v0.15) — every Anthropic turn commits tosubagent_messages, every tool call tosubagent_tool_executions. Worker crashes resume from the last committed turn. - Skillify workflow —

gbrain skillify scaffold+gbrain skillify checkturns one-off fixes into permanent skills with tests, resolver entries, and an audit. Version it, repeat it, regression-test it. - Health checks —

gbrain doctor,gbrain skillpack-check --quiet(CI exit codes),gbrain skillpack install --dry-run. Treats the brain as infrastructure, not a hobby project.

This kind of infrastructure depth typically takes 18 months to land in an open-source project. GBrain shipped it from the start because it was already running in Tan's production brain.

6. Honest Marketing

The published BrainBench numbers describe what the code actually does. The README is candid about install gotchas (don't use bun install -g, don't use npm install -g gbrain because of a squatted package). The architecture docs match the code. There's no "100% LongMemEval, world's best memory system" hype — just specific numbers on a clearly described corpus, with the eval code in a sibling repo for anyone to reproduce.

In a category that has had real benchmark-honesty problems (see our MemPalace review), this is a meaningful differentiator on its own.

What's Clever

A few specific design choices worth highlighting because they reflect non-obvious good judgment.

The "compiled truth + timeline" page pattern. Every brain page has a summary section at the top (compiled truth) and an append-only timeline below. Updates compile into the truth section; history is preserved in the timeline. This solves the standard "memory either gets stale or grows unboundedly" problem with a structure operators can read and audit.

Skills as code, not config. A skill is a fat markdown file describing a workflow — when to fire, what to check, how to chain — that the agent reads and executes. This is the opposite of YAML-driven workflow engines. The trade-off is more verbose skill authoring; the upside is that you can read why your agent did something by reading a markdown file, not by debugging a state machine.

"Thin harness, fat skills" ethos. GBrain's runtime is intentionally minimal. The intelligence lives in the 34 skill files that ship with the project (and in the ones you author). This means you can replace or fork skills without touching the core, and means the operator owns the agent's behavior rather than fighting the framework.

Localized to a knowable problem. GBrain is built specifically for OpenClaw and Hermes Agent operators with personal brains. It's not trying to be everyone's agent memory. That focus is why the design choices fit together — and it's also why teams outside that audience should look elsewhere.

Where GBrain Falls Short

These are real limitations, sourced from the README, the architecture docs, and the install path. They're not flaws so much as scope choices, but they're the reasons most teams will end up looking at alternatives.

1. Single-Operator Design

GBrain is built for one operator running a personal brain. Sharing across users requires moving from PGLite to Postgres, managing the brain repo's git operations across machines, and keeping the index in sync with the markdown. The remote Model Context Protocol (MCP) HTTP server (gbrain serve --http) ships OAuth 2.1 with a per-client scope model, so multi-client access is a first-class path. Multi-operator access (different users, different brains, isolation guarantees) is not the design center. If you need multi-tenant memory for an agent product serving end users, this is a structural mismatch.

2. No Managed Cloud

GBrain is self-hosted only. PGLite for local, external Postgres (Tan uses Supabase) for shared mode. There is no "Hindsight Cloud"-equivalent option — no gbrain cloud signup, no managed control plane. If your team can run Postgres and Bun, you'll be fine. If your team wants memory as a service, GBrain isn't it.

3. Integration Breadth Is Narrow

First-class skill packs ship for OpenClaw (openclaw.plugin.json) and Hermes Agent (referenced throughout INSTALL_FOR_AGENTS.md). Everything else integrates through the MCP server you stand up yourself. There are no first-party packages for Claude Code (other than as a generic MCP client), Cursor, Codex, CrewAI, LangGraph, LlamaIndex, AutoGen, n8n, Dify, Pipecat, or LiteLLM. For comparison, Hindsight ships official integrations for 25+ such tools at /sdks/integrations/<name>.

This narrow integration surface is a real day-one obstacle for teams whose stack isn't OpenClaw or Hermes. You can wire it up — that's what the MCP server is for — but you're authoring the wiring.

4. Schema Discipline Required

Every pattern in GBrain is operator-authored. The schema lives in docs/GBRAIN_RECOMMENDED_SCHEMA.md (a sensible default Tan ships), the workflows live in skills/, the recipes live in recipes/. If your facts don't fit a skill, the agent doesn't synthesize new structure for you — you write a new skill. GBrain's own documentation is candid: teams that treat the brain as set-and-forget will compound errors instead of value.

This is a feature for operators who want to author the patterns their brain follows. It is a real cost for operators who'd rather memory just synthesized structure from raw facts on its own (the way Hindsight's observations work). Honest evaluation matters here — picking GBrain because the install is fast, then bouncing off because you don't want to author 10+ skills, is a worse outcome than picking the right tool the first time.

5. No Multi-Hop Graph or Temporal Reasoning at Retrieval

GBrain extracts typed entity edges at write time and uses them for backlink-boosted ranking. But the retriever doesn't traverse the graph multi-hop as a primary strategy. Queries like "who has invested in companies founded by people I met at YC?" require walking the graph, and that's not how GBrain's hybrid retriever fires. Similarly, temporal queries — "what was true last week but isn't now?" — aren't a first-class feature.

For most personal-brain queries this doesn't matter. For workloads where multi-hop or temporal reasoning is structurally required, you want a system whose retriever runs those strategies as primary.

6. Maturity and Install Gotchas

GBrain is at v0.30 with frequent breaking changes (recent v0.28.x and v0.30.x release windows added BrainBench-Real session capture, multimodal ingestion via Voyage, npm-squat detection, and dream-cycle synthesize improvements). The README itself documents two install footguns — never use bun install -g github:garrytan/gbrain (postinstall hook blocked), never use npm install -g gbrain (a squatted package on the npm registry). Both are tracked GitHub issues.

This isn't unusual for a project at this age, and the README is admirably candid about it. But if you need rock-stable behavior across releases, GBrain isn't there yet. Pin a version, expect to track issues, and budget time to patch.

Day-One Experience

The 30-minute install path is real. If you're running OpenClaw or Hermes, the agent-driven install (paste the INSTALL_FOR_AGENTS.md URL into your agent and let it work) is genuinely smooth — clone, install Bun, link, init the PGLite database, configure API keys. Two seconds for the database, ~30 minutes total.

If you're not running OpenClaw or Hermes, the standalone CLI path (git clone + bun install && bun link + gbrain init) is also fine, but you're then on your own to wire the brain into whatever agent or client you actually use. The MCP server makes this possible; it doesn't make it five minutes.

The brain itself starts empty unless you import. gbrain import ~/notes/ will index existing markdown — Obsidian, Logseq, plain text, etc. — which is the recommended way to seed the brain so day-one retrieval is meaningful. From scratch, the first useful retrieval requires the agent to actually run for a while and write pages.

Long-Term Experience (Inferred from Public Reports)

We have not run GBrain in production for months ourselves. The long-term picture in this section is inferred from the README, Tan's published numbers, the gbrain-evals repo, and public discussion since launch.

The signals are positive. Tan's own brain has grown to tens of thousands of pages over a multi-year corpus with 19+ cron jobs running autonomously. The fail-improve loop genuinely reduces LLM dependency over time. The Minions infrastructure handles real production load. Several public adopters report that the brain becomes meaningfully smarter at the 4–8 week mark, when tier-2 enrichment starts firing on people who keep showing up across sources.

The negative signals are the ones you'd expect: occasional breaking changes between minor versions, install issues for new operators (largely fixed by v0.28.5+ self-detection), and the recurring need to author skills as the operator's workflow evolves. None of these are reasons not to install GBrain — they're reasons to know what you're signing up for.

Performance and Benchmarks

GBrain's published benchmark, BrainBench, runs on a 240-page Opus-generated rich-prose corpus (eval code and corpus live in the gbrain-evals sibling repo):

- P@5: 49.1%, R@5: 97.9% for the full system

- +31.4 points P@5 vs. the same system with the graph layer disabled

- Similar margin vs. ripgrep-BM25 + vector-only RAG

LongMemEval integration shipped in the v0.28 release window (gbrain eval longmemeval <dataset.jsonl>); the gbrain-evals repo currently reports 97.60% R@5 on LongMemEval. GBrain has not run BEAM (the long-horizon benchmark Hindsight currently leads at 64.1% on 10M tokens), and the retrieve-everything-style benchmarks favored by some competitors aren't tested.

The honest read on GBrain's benchmarks: the published numbers are internally consistent, the methodology is documented, and the eval code is reproducible. They are not directly comparable to academic benchmark scores from other systems because the corpus is different.

Pricing

GBrain itself is MIT-licensed and free. Real costs are:

- OpenAI API — required for vector search (text embeddings). Roughly $0.10 per million tokens of ingestion at current pricing.

- Anthropic API — optional. Powers query expansion (~2 alternative phrasings per search). Skipping it works; Anthropic-augmented retrieval is meaningfully better.

- Postgres — free with PGLite for local; for shared mode, your existing Postgres or a managed instance (Tan uses Supabase). Supabase free tier handles a small brain comfortably.

In practice, an active personal brain costs single-digit dollars per month in LLM calls. The architectural choices that make this true (deterministic entity extraction, fail-improve loop) are why GBrain compares so favorably on cost to LLM-heavy memory products.

Who Should Install GBrain

You should install GBrain if you:

- Run OpenClaw or Hermes Agent (the supported integration targets)

- Want plain-text ownership of your memory in a git repo

- Are willing to author skill workflows as your schema evolves

- Have a multi-month time horizon — GBrain rewards investment and underdelivers on a one-week trial

- Are operating at single-operator scale (yourself, not a multi-tenant product)

- Are comfortable running Postgres and tracking GitHub issues for a young project

You should look at GBrain alternatives if you:

- Run any agent stack other than OpenClaw or Hermes (Claude Code, Cursor, CrewAI, LangGraph, n8n, etc.) and want a first-class integration

- Need memory as a service rather than self-hosted infrastructure

- Want a system that synthesizes structure from raw facts automatically (observations, mental models) rather than running operator-authored skills

- Need multi-tenant isolation, enterprise compliance posture, or a managed cloud

- Need multi-hop graph traversal or temporal reasoning at retrieval as primary strategies

For the head-to-head against the closest production alternative, see GBrain vs Hindsight: AI Agent Memory Compared.

Final Verdict

GBrain is a genuinely impressive piece of engineering for the audience it's designed for. The compounding mechanisms (tiered enrichment, fail-improve loop, backlink ranking) are non-obvious good design. The infrastructure (Minions, durable agents, skillify, health checks) is more polished than most young open-source projects. The marketing matches what the code does. And the fundamental decision to make markdown the source of truth — diffable, branchable, operator-readable — is the right call for the operator-owns-their-brain audience.

It is also opinionated software with a narrow integration surface, single-operator design, no managed cloud, and a young codebase. It is not the right pick for teams who want agent memory that works across whatever stack they happen to run.

Pick GBrain if the description above fits you. If it doesn't, Hindsight and the alternatives covered in GBrain alternatives are likely the better path. Both can be true: GBrain is excellent, and it isn't for everyone.

Frequently Asked Questions

Is GBrain worth installing? If you fit the audience above (OpenClaw/Hermes operator who values markdown source of truth and is willing to author skills), yes — install it. If you don't fit that audience, the alternatives will likely fit better.

Is GBrain better than Mem0 / Zep / Hindsight? Wrong question. GBrain is a different product class from production memory platforms — it optimizes for a single-operator markdown brain, while Mem0 / Zep / Hindsight optimize for memory-as-a-service. See GBrain vs Hindsight for a head-to-head against the closest production alternative.

Can I run GBrain in production? It depends what production means. As Tan's personal brain serving his daily agent workflow: yes, demonstrably. As multi-tenant memory infrastructure for an agent product serving thousands of end users: not without significant custom work, because that isn't its design center.

How much does GBrain cost to run? Single-digit dollars per month for a personal brain at active usage. GBrain itself is free; you pay for OpenAI embeddings (required), optional Anthropic for query expansion, and your Postgres (PGLite free, Supabase free tier sufficient for small brains).

Is the celebrity / YC factor inflating GBrain's reputation? A bit, yes. The brand halo is doing some of the work that drove the rapid star count. But the underlying architecture and engineering also stand on their own — the BrainBench numbers, the Minions benchmarks, and the fail-improve loop are real and well-designed. Strip the YC-CEO branding and GBrain would still be one of the better personal-brain projects on offer; with it, GBrain is also benefiting from outsized visibility.

Further Reading

- What is GBrain? Garry Tan's AI Agent Memory System Explained — Foundational explainer

- GBrain vs Hindsight: AI Agent Memory Compared — Head-to-head with the closest production alternative

- GBrain alternatives: 5 best agent memory systems — When GBrain isn't the right fit

- GBrain vs Hindsight vs Mem0 vs Zep — Four-way comparison across the leading memory systems

- What is agent memory? — Foundational concepts

- Best AI agent memory systems compared — Full landscape

- OpenClaw vs Hermes Agent: Memory Compared — The two agents GBrain ships skill packs for