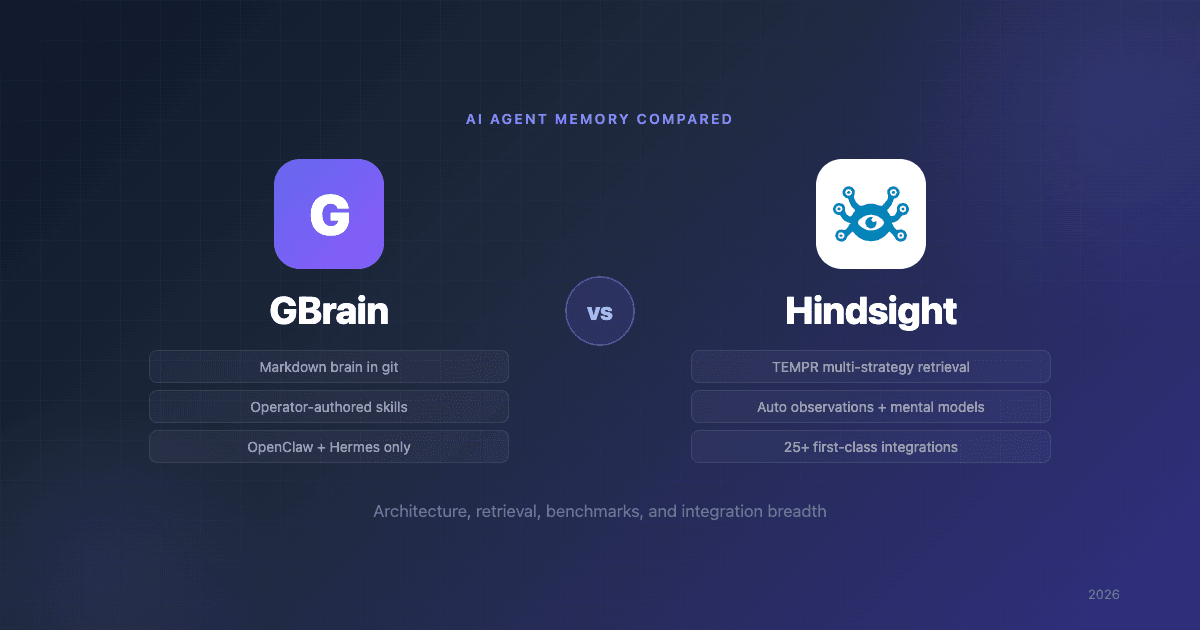

GBrain vs Hindsight: AI Agent Memory Compared

GBrain vs Hindsight is the comparison evaluators face most often when shopping AI agent memory: the markdown-first personal brain Y Combinator CEO Garry Tan open-sourced in April 2026, against the production memory platform Vectorize ships under the same MIT license. Both call themselves memory for AI agents. Both use hybrid Postgres retrieval. Both expose Model Context Protocol (MCP) servers. They solve different problems for different operators.

GBrain is the personal knowledge system Garry Tan had been quietly running behind his own AI agent setup. The repo went public on April 5, 2026 and sits at roughly 14,000 stars at the time of writing. The pitch was immediately compelling: a git-versioned brain repo, hybrid Postgres retrieval, an MCP server with 30+ contract-first operations, and 34 skills designed to be plugged straight into OpenClaw and Hermes Agent.

Hindsight, built by Vectorize and released in December 2025, has accumulated roughly 12,800 GitHub stars and powers production agent memory deployments via a managed cloud platform, native MCP, and a four-strategy retrieval engine that holds the current SOTA on the BEAM 10M-token benchmark.

This GBrain vs Hindsight comparison breaks down architecture, retrieval, benchmarks, integrations, and use case fit so you can pick the right one.

Quick Comparison

| Dimension | GBrain | Hindsight |

|---|---|---|

| Creator | Garry Tan (YC CEO) | Vectorize |

| Release | April 5, 2026 | December 2025 |

| License | MIT | MIT (managed cloud also available) |

| Source of truth | Markdown files in a git repo | Structured memory store |

| Retrieval | Hybrid (HNSW + tsvector) with RRF | Multi-strategy (semantic + BM25 + graph + temporal) with cross-encoder reranking |

| Auto-learning from new memories | Operator-authored skills run on cron | Observations auto-consolidate facts; mental models auto-refresh |

| Storage backend | Postgres + pgvector (or PGLite WASM for local) | Postgres 14+, pluggable vector extension (pgvector, pgvectorscale, vchord, ScaNN) and LLM provider |

| Self-host | Yes; git clone + bun install && bun link; PGLite means no DB server | Yes; Docker, Helm, or bare-metal pip install on Linux / macOS / Windows |

| Zero-config local mode | PGLite (WASM Postgres) | Embedded pg0 Postgres bundled with the API |

| Managed cloud | No | Yes |

| MCP | Yes (native) | Yes; OAuth 2.1 on the managed cloud |

| Built for | OpenClaw + Hermes Agent (single operator) | Any agent framework, multi-tenant by design |

| Official integrations | OpenClaw skill pack; Hermes via MCP | 25+ integrations spanning Claude Code, Cursor, Codex, Hermes, CrewAI, LangGraph, LlamaIndex, AutoGen, Agno, n8n, Dify, Pipecat, LiteLLM, and more |

| GitHub stars | ~14,000 | ~12,800 |

What Each System Is

GBrain: A Brain Repo, Generalized

GBrain is the system Garry Tan built for himself, then released. His own brain holds tens of thousands of pages, thousands of people, hundreds of companies, and a fleet of autonomous cron jobs that ingest meeting transcripts, emails, and tweets while he sleeps. Each markdown page follows a "compiled truth + timeline" pattern — a summary at the top, dated entries below — so that updates are append-only and history is preserved.
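The "compiled truth + timeline" pattern can be illustrated with a small sketch. The `BrainPage` class, its field names, and the newest-first ordering are assumptions made for illustration; they are not GBrain's actual page schema or code.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class BrainPage:
    """Hypothetical model of one page: summary on top, dated log below."""
    title: str
    compiled_truth: str = ""
    timeline: list[tuple[date, str]] = field(default_factory=list)

    def append_entry(self, when: date, note: str) -> None:
        # Timeline entries are only ever appended, never edited or removed.
        self.timeline.append((when, note))

    def recompile(self, summary: str) -> None:
        # The summary is rewritten in place; the timeline keeps the history.
        self.compiled_truth = summary

    def to_markdown(self) -> str:
        lines = [f"# {self.title}", "", self.compiled_truth, "", "## Timeline", ""]
        # Newest entries first; this ordering is an assumption.
        for when, note in sorted(self.timeline, reverse=True):
            lines.append(f"- {when.isoformat()}: {note}")
        return "\n".join(lines)


# Fictional example data.
page = BrainPage("Alice Example")
page.append_entry(date(2026, 1, 10), "Met at a demo day; working on robotics.")
page.append_entry(date(2026, 3, 2), "Raised a seed round.")
page.recompile("Founder working on robotics; raised a seed round.")
print(page.to_markdown())
```

Because only the summary is ever rewritten, a `git diff` of the rendered file shows a changed summary block plus added timeline lines, which is what keeps the history reviewable.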

The released project generalizes that pattern. The architecture is three layers:

- Brain Repo — plain Markdown files, version-controlled in git. The system of record. Human-readable, diff-able, branchable.

- GBrain Retrieval — Postgres with pgvector for HNSW vector search and tsvector for keyword search, fused with Reciprocal Rank Fusion. PGLite (WASM Postgres) for zero-config local mode.

- AI Agent Skills — a skill pack that teaches OpenClaw or Hermes Agent how to read, write, and reason against the brain.

The whole thing is contract-first: a single set of operations defines the system, and those operations are exposed through both a CLI and an MCP server. There are 30+ operations covering ingest, entity detection, retrieval, and graph manipulation, plus 34 markdown skill files that teach the agent when and how to chain those operations into workflows like meeting ingestion, daily task prep, or book-mirror analysis.
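To make "contract-first" concrete, here is a minimal sketch in which one operation definition is reached through two thin adapters. The registry, the `search` operation, and both adapter functions are hypothetical names invented for this example; GBrain's real operation set and wire formats are not reproduced here.

```python
import json
from typing import Callable

# Hypothetical registry: one definition per operation, shared by every transport.
OPERATIONS: dict[str, Callable[..., dict]] = {}


def operation(name: str):
    """Register a function as a named operation."""
    def register(fn):
        OPERATIONS[name] = fn
        return fn
    return register


@operation("search")  # hypothetical operation name
def search(query: str, limit: int = 5) -> dict:
    # Placeholder body; a real operation would query the index.
    return {"query": query, "limit": limit, "results": []}


def run_cli(argv: list[str]) -> dict:
    """CLI-style adapter: ["search", "query=foo", "limit=3"]."""
    name, *pairs = argv
    kwargs = dict(pair.split("=", 1) for pair in pairs)
    if "limit" in kwargs:
        kwargs["limit"] = int(kwargs["limit"])
    return OPERATIONS[name](**kwargs)


def run_tool_call(payload: str) -> dict:
    """Tool-call-style adapter: JSON with a name and an arguments object."""
    call = json.loads(payload)
    return OPERATIONS[call["name"]](**call["arguments"])


cli_result = run_cli(["search", "query=garry", "limit=3"])
tool_result = run_tool_call('{"name": "search", "arguments": {"query": "garry", "limit": 3}}')
print(cli_result == tool_result)
```

The design point is that neither adapter contains business logic, so the two surfaces cannot drift apart.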

Hindsight: A Memory Platform

Hindsight takes a platform stance. Rather than asking the operator to manage markdown files and a Postgres instance, it offers a managed memory layer with three core operations — retain, recall, reflect — and lets the agent treat memory as a service.

The retain → recall → reflect loop is the Hindsight contract:

- retain — store a memory; Hindsight extracts entities, resolves them against the existing knowledge graph, and indexes the memory across multiple retrieval strategies.

- recall — query the store; Hindsight runs four parallel retrieval strategies, fuses them with RRF, and reranks with a cross-encoder.

- reflect — reason over accumulated memory to produce summaries, opinions, and procedural skills.

The same API works against the managed cloud or against a self-hosted deployment. Both surface as MCP. The managed cloud adds OAuth 2.1 for one-click MCP onboarding; self-hosted deployments wire MCP through whatever auth your stack already uses.
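The shape of the retain, recall, reflect loop can be sketched with a toy in-memory store. This is not Hindsight's client API: the `ToyMemory` class, its method signatures, the capitalized-word "entity" heuristic, and the word-overlap ranking are all stand-ins chosen to keep the example self-contained.

```python
import re
from collections import defaultdict


class ToyMemory:
    """Illustrative stand-in for a retain/recall/reflect store (not Hindsight's API)."""

    def __init__(self) -> None:
        self.memories: list[str] = []
        self.by_entity: dict[str, set[int]] = defaultdict(set)

    def retain(self, text: str) -> int:
        # Store the memory and index it by naive "entities" (capitalized words).
        idx = len(self.memories)
        self.memories.append(text)
        for entity in re.findall(r"\b[A-Z][a-z]+\b", text):
            self.by_entity[entity.lower()].add(idx)
        return idx

    def recall(self, query: str, k: int = 3) -> list[str]:
        # Rank by word overlap with the query; a real system fuses several signals.
        q = set(re.findall(r"\w+", query.lower()))
        scored = []
        for idx, text in enumerate(self.memories):
            overlap = len(q & set(re.findall(r"\w+", text.lower())))
            if overlap:
                scored.append((overlap, -idx, text))
        return [text for _, _, text in sorted(scored, reverse=True)[:k]]

    def reflect(self, entity: str) -> str:
        # "Reasoning" here is just collecting everything known about one entity.
        ids = sorted(self.by_entity.get(entity.lower(), ()))
        return f"{entity}: " + " ".join(self.memories[i] for i in ids)


# Fictional example data.
mem = ToyMemory()
mem.retain("Alice prefers Vue for frontend work.")
mem.retain("Bob joined the infra team.")
print(mem.recall("What does Alice prefer?"))
print(mem.reflect("Alice"))
```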

GBrain vs Hindsight Architecture: Markdown-First vs Structured-First

GBrain and Hindsight differ most clearly in what they treat as the source of truth.

GBrain treats human-readable markdown as primary. The retrieval index exists to serve queries against the markdown — but the markdown is what you keep, edit, and version. If the database disappears, you can rebuild it from the repo. The trade-off: the agent has to write good markdown, and you have to develop the discipline to curate the schema. GBrain's own documentation is candid about this — teams that treat the brain as set-and-forget will compound errors instead of value.

Hindsight treats the structured store as primary. Memories enter the system as facts — extracted entities, resolved relationships, confidence scores. Markdown isn't the system of record; the structured graph is. The trade-off: you can't git diff your memory, and human review of memories happens through the Hindsight UI rather than a text editor.

This isn't a quality difference; it's a philosophical one. If you are an operator who wants to read, edit, and own the source of truth in plain text, GBrain's approach is unambiguously the better fit. If you are deploying memory to agents that will write thousands of memories per day across multiple tenants, structured-first is operationally cheaper.

Entity Linking

Both systems extract entities and create typed links between them.

GBrain extracts links during page writes using regex and string-matching rules — typed predicates like attended, works_at, invested_in, founded, and advises get materialized into the graph with zero LLM calls per write. This is fast and cheap, and it means the brain can index aggressively even on large daily ingestion runs. The cost: the entity vocabulary is constrained to what the rules cover.
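A rough sketch of rule-based link extraction follows. The rule table, the `NAME` pattern, and the `extract_links` function are assumptions for illustration and are not GBrain's actual rules; only the predicate names come from the description above.

```python
import re

# Hypothetical pattern for a capitalized, possibly multi-word name.
NAME = r"[A-Z][\w&-]*(?: [A-Z][\w&-]*)*"

# Hypothetical rule table: predicate -> pattern with subject and object groups.
RULES = {
    "works_at": re.compile(rf"(?P<subject>{NAME}) works at (?P<object>{NAME})"),
    "invested_in": re.compile(rf"(?P<subject>{NAME}) invested in (?P<object>{NAME})"),
    "founded": re.compile(rf"(?P<subject>{NAME}) founded (?P<object>{NAME})"),
    "advises": re.compile(rf"(?P<subject>{NAME}) advises (?P<object>{NAME})"),
}


def extract_links(text: str) -> list[tuple[str, str, str]]:
    """Return (subject, predicate, object) triples matched by the rules. No LLM call."""
    links = []
    for predicate, pattern in RULES.items():
        for match in pattern.finditer(text):
            links.append((match["subject"], predicate, match["object"]))
    return links


# Fictional example text.
page_text = "Alice Chen founded Acme Robotics. Bob Lee invested in Acme Robotics."
links = extract_links(page_text)
print(links)
```

The limitation described above shows up directly: a sentence such as "Acme Robotics was started by Alice Chen" produces no link until someone adds a rule for it.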

Hindsight extracts entities and relationships using a learned extractor as part of retain. The extractor is broader — it identifies entities and relations the agent has never named explicitly, and it runs entity resolution against the existing graph so that "Garry," "Garry Tan," and "@garrytan" collapse to one node. This is more accurate at the boundary, but it costs an LLM call per significant write.
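What "collapse to one node" means can be shown with a deliberately simple alias table. Hindsight is described as using a learned extractor; the string normalization below is a swapped-in stand-in, and `AliasIndex`, `normalize`, and the node id format are hypothetical.

```python
from typing import Optional


def normalize(mention: str) -> str:
    # Lowercase, drop a leading "@", and remove spaces: "@garrytan" -> "garrytan".
    return mention.strip().lstrip("@").lower().replace(" ", "")


class AliasIndex:
    """Toy entity resolver: maps surface forms to one canonical node id."""

    def __init__(self) -> None:
        self.canonical: dict[str, str] = {}

    def register(self, node_id: str, aliases: list[str]) -> None:
        for alias in aliases:
            self.canonical[normalize(alias)] = node_id

    def resolve(self, mention: str) -> Optional[str]:
        # Returns None when the mention matches no known alias.
        return self.canonical.get(normalize(mention))


index = AliasIndex()
index.register("person:garry-tan", ["Garry", "Garry Tan", "@garrytan"])
for mention in ["Garry", "garry tan", "@GarryTan", "Gary"]:
    print(mention, "->", index.resolve(mention))
```

A table like this only resolves aliases someone has registered; a learned resolver is meant to handle mentions no one listed in advance, which is where the per-write LLM cost comes from.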

For a personal brain at one-operator scale, GBrain's deterministic extraction is the right call — it stays cheap as your corpus grows. For a multi-tenant memory platform serving thousands of writes per minute, you generally want learned extraction with entity resolution, because the cost-per-write can be amortized and the ambiguity at the boundary is where errors compound.

Automatic Learning vs Operator-Curated Workflow

Both systems automate ingestion, but they automate fundamentally different things. This is the most consequential architectural difference once you start running either at scale.

Hindsight learns automatically as memories arrive. Two layers above raw facts do this work:

- Observations: after every `retain()`, a background consolidation engine groups related new facts against existing observations, refines beliefs (rather than overwriting them), and tracks the evolution. Each observation carries a proof count, exact-quote evidence, and a computed freshness trend (stable / strengthening / weakening / new / stale). When a new fact contradicts an existing observation, the engine reconciles the contradiction by capturing the journey ("User was previously a React enthusiast but has since switched to Vue") instead of silently overwriting the prior belief. No operator wrote a "track preference change" rule; the system synthesized it from the raw facts.

- Mental models: user-defined topics whose content is auto-maintained. You name a question you care about ("what do we know about Alice?"), Hindsight runs `reflect()` to populate it, and the model auto-refreshes against new facts via tag-filtered refresh. The operator picks the topics; the system populates and updates them.

reflect() then queries hierarchically — mental models first (highest-priority pre-computed answers), then observations (consolidated beliefs), then raw facts as ground truth. The agent gets condensed knowledge, not just a pile of raw memories.
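The priority order can be sketched as a tiered lookup. The function name, the dictionary-based stores, and the choice to stop at the first tier with content are simplifying assumptions; how Hindsight actually combines the tiers is not specified here.

```python
def reflect_lookup(topic, mental_models, observations, raw_facts):
    """Return (tier, entries) from the highest-priority tier that has content."""
    tiers = [
        ("mental_model", mental_models),  # pre-computed answers, checked first
        ("observation", observations),    # consolidated beliefs
        ("raw_fact", raw_facts),          # ground-truth fallback
    ]
    for tier, store in tiers:
        entries = store.get(topic, [])
        if entries:
            return tier, entries
    return "none", []


# Fictional example data keyed by topic.
mental_models = {"alice": ["Alice leads frontend and currently favors Vue."]}
observations = {
    "alice": ["Alice was a React enthusiast but has since switched to Vue."],
    "bob": ["Bob consistently prefers async communication."],
}
raw_facts = {"carol": ["Carol mentioned a move to Berlin."]}

for topic in ["alice", "bob", "carol", "dave"]:
    print(topic, reflect_lookup(topic, mental_models, observations, raw_facts))
```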

GBrain automates a different layer. GBrain's automation runs operator-written skills on a schedule. The signal-detector skill fires on every message and captures entity mentions. The enrich skill creates and updates person/company pages with compiled truth and timelines. The maintain skill handles stale pages, orphans, dead links, and citation audits. Recent dream-cycle synthesize improvements run overnight to turn conversation transcripts into reflections and patterns. All cron jobs are autonomous in the sense that the agent runs them while you sleep — but every one of those skills is a markdown workflow file the operator (or Tan, in the released defaults) wrote and the operator owns.

This produces a real trade-off:

- GBrain's automation is executive. It runs the patterns you've declared. The schema lives in `docs/GBRAIN_RECOMMENDED_SCHEMA.md`, the workflows live in `skills/`, the recipes live in `recipes/`. Compounding works because the operator has invested in the schema; the system itself doesn't infer it. If your facts don't fit a skill, the agent doesn't synthesize new structure; you write a new skill.

- Hindsight's automation is generative. It synthesizes new structure (observations) from raw facts without an operator-defined pattern. As the corpus grows, beliefs emerge, evolve, and get reconciled with no schema work. The trade-off is that the structure is in the bank, not in your text editor: you don't `git diff` your observations, you query them.

For a single operator who knows exactly how they want their brain organized and is happy to author skills as the schema evolves, GBrain's executive automation is the right tool. For an agent serving end users where the schema cannot be hand-curated per tenant, Hindsight's generative automation is structurally cheaper to operate.

GBrain vs Hindsight Retrieval: Where the Architectures Diverge Most

Retrieval is where the two designs differ most in practice, so it deserves the closest reading in any GBrain vs Hindsight comparison.

GBrain: Hybrid Search with Multi-Query Expansion

A query into GBrain follows this path (per docs/architecture/infra-layer.md):

- Optional query expansion: if `ANTHROPIC_API_KEY` is set, Claude Haiku generates 2 alternative phrasings to broaden coverage. Without Anthropic, expansion is skipped and search still works.

- Vector search: HNSW cosine similarity over pgvector embeddings on `content_chunks`.

- Keyword search: Postgres `tsvector` with `ts_rank`, run in parallel. Title is weighted A, compiled-truth section B, timeline C.

- RRF merge: results combined with `score = Σ(1 / (60 + rank))`.

- 4-layer dedup: best 3 chunks per page (source dedup), Jaccard > 0.85 (text dedup), and two more passes.

- Backlink-boosted ranking: pages that other brain pages link to get a ranking lift.
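The merge and the first two dedup passes in the list above can be sketched as follows. This is an illustration, not GBrain's code: the function names are invented, ranks are assumed to start at 1, Jaccard similarity is computed over whitespace-split words, and the two unnamed dedup passes and the backlink boost are left out.

```python
from collections import defaultdict


def rrf_merge(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Reciprocal Rank Fusion: score = sum over lists of 1 / (k + rank)."""
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, chunk_id in enumerate(ranking, start=1):  # rank starts at 1 (assumption)
            scores[chunk_id] += 1.0 / (k + rank)
    # Highest score first; ties broken by id so the output is deterministic.
    return sorted(scores, key=lambda c: (-scores[c], c))


def jaccard(a: str, b: str) -> float:
    sa, sb = set(a.lower().split()), set(b.lower().split())
    return len(sa & sb) / len(sa | sb) if (sa | sb) else 0.0


def dedup(ranked, page_of, text_of, per_page=3, threshold=0.85):
    """Keep at most per_page chunks per page and drop near-duplicate text."""
    kept, count = [], defaultdict(int)
    for cid in ranked:
        if count[page_of[cid]] >= per_page:
            continue  # source dedup
        if any(jaccard(text_of[cid], text_of[other]) > threshold for other in kept):
            continue  # text dedup
        kept.append(cid)
        count[page_of[cid]] += 1
    return kept


# Fictional chunk ids, pages, and texts.
vector_hits = ["a", "b", "c"]
keyword_hits = ["b", "d", "a"]
merged = rrf_merge([vector_hits, keyword_hits])

page_of = {"a": "p1", "b": "p2", "c": "p3", "d": "p4"}
text_of = {
    "a": "garry tan founded initialized capital",
    "b": "Garry Tan founded Initialized Capital",
    "c": "meeting notes from the march offsite",
    "d": "seed round closed in january",
}
print(merged)
print(dedup(merged, page_of, text_of))
```

Chunk `b` wins the merge because it ranks high in both lists, and `a` is then dropped as a near-duplicate of `b`, which is the behavior the fusion and dedup steps are meant to produce.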

GBrain's published BrainBench numbers (sibling gbrain-evals repo) report P@5 49.1% and R@5 97.9% on a 240-page Opus-generated rich-prose corpus, beating the same system with the graph layer disabled by +31.4 points P@5, and beating ripgrep-BM25 + vector-only RAG by a similar margin. The headline takeaway: GBrain's structured graph (the typed-edge layer) is doing more retrieval work than the hybrid search alone.
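For readers less familiar with the metrics, P@5 and R@5 use the standard definitions sketched below. The example data is fictional and is not drawn from BrainBench.

```python
def precision_at_k(retrieved: list[str], relevant: set[str], k: int = 5) -> float:
    """Fraction of the top-k results that are relevant."""
    hits = sum(1 for doc in retrieved[:k] if doc in relevant)
    return hits / k


def recall_at_k(retrieved: list[str], relevant: set[str], k: int = 5) -> float:
    """Fraction of all relevant documents that appear in the top-k results."""
    if not relevant:
        return 0.0
    hits = sum(1 for doc in retrieved[:k] if doc in relevant)
    return hits / len(relevant)


# Fictional query with two relevant pages.
retrieved = ["p1", "p2", "p3", "p4", "p5"]
relevant = {"p1", "p3"}
print(precision_at_k(retrieved, relevant))
print(recall_at_k(retrieved, relevant))
```

One way a large gap between the two numbers can arise: when a query has only two or three relevant pages, a top-5 list can contain all of them (high recall) while the remaining slots are filled with non-relevant pages (precision near one half).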

Hindsight: TEMPR (Four Parallel Strategies)

Hindsight's retriever, TEMPR, runs four strategies on every query:

- Semantic search — vector similarity, like GBrain.

- BM25 keyword search — also like GBrain.

- Graph traversal — follow typed entity edges multi-hop. GBrain has the edges but doesn't traverse them as a primary retrieval strategy.

- Temporal reasoning — native handling of time-based queries: "what did I learn about X last week?", "what was true in January but isn't now?", "what changed between April and May?"

Results from all four strategies fuse through RRF, then re-score through a cross-encoder reranker.

The two strategies Hindsight has that GBrain doesn't are graph traversal and temporal reasoning. For a brain that holds people, companies, and relationships, multi-hop graph traversal answers questions vector search simply cannot — "who has invested in companies founded by people I met at YC?" requires walking the graph, not nearest-neighbor lookup. Temporal reasoning matters when memory needs to honor when something was true, not just whether it was ever true.
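The investor question above can be answered with three typed hops over an edge list. The sketch below uses fictional edges and a hypothetical `met_at_yc` predicate; it shows why the query is a graph walk, and it is not Hindsight's traversal implementation.

```python
from collections import defaultdict

# Fictional (subject, predicate, object) edges.
EDGES = [
    ("me", "met_at_yc", "Alice"),
    ("me", "met_at_yc", "Bob"),
    ("Alice", "founded", "Acme"),
    ("Bob", "founded", "Birch"),
    ("Carol", "invested_in", "Acme"),
    ("Dave", "invested_in", "Birch"),
    ("Erin", "invested_in", "Other"),
]

forward = defaultdict(set)   # (subject, predicate) -> objects
backward = defaultdict(set)  # (object, predicate) -> subjects
for s, p, o in EDGES:
    forward[(s, p)].add(o)
    backward[(o, p)].add(s)


def hop(nodes: set[str], predicate: str, index) -> set[str]:
    """Follow one typed edge from every node in the frontier."""
    result: set[str] = set()
    for node in nodes:
        result |= index.get((node, predicate), set())
    return result


people = hop({"me"}, "met_at_yc", forward)            # people I met at YC
companies = hop(people, "founded", forward)           # companies they founded
investors = hop(companies, "invested_in", backward)   # who invested in those
print(sorted(investors))
```

Erin is excluded even though she is an investor, because her investment is not connected to the starting node; nearest-neighbor search over text has no notion of that connection.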

This isn't theoretical. Hindsight currently holds SOTA on the BEAM 10M-token benchmark at 64.1%, with the next-best system at 40.6%. BEAM stresses the kind of long-horizon, time-aware queries where graph traversal and temporal reasoning matter most. GBrain has not published BEAM results.

Benchmarks

Direct head-to-head benchmarks between GBrain and Hindsight don't exist yet. What's available:

| Benchmark | GBrain | Hindsight |

|---|---|---|

| BEAM 10M tokens | Not published | 64.1% (SOTA) |

| LongMemEval | Integrated as gbrain eval longmemeval; 97.60% R@5 reported in gbrain-evals | 91.4% (full system) |

| BrainBench (240-page Opus corpus) | P@5 49.1%, R@5 97.9%; +31.4 pts P@5 from graph layer | Not run against this corpus |

| Multi-tenant production load | Single-operator design | Built for multi-tenant load |

The honest read: GBrain's reported BrainBench numbers come from an in-house evaluation on a 240-page corpus that is useful as a sanity check on the retrieval design. They are not directly comparable to Hindsight's published academic-benchmark results. GBrain's LongMemEval integration is recent, so community-reproducible scores are still emerging.

Day-One Value and Compounding

A real difference that doesn't show up in benchmarks: how each system performs early on.

GBrain installs in about 30 minutes — git clone, bun install && bun link, gbrain init. PGLite means there's no Postgres server to provision; the database is ready in two seconds. If you gbrain import ~/notes/ with an existing markdown corpus, day-one retrieval is meaningful. If you start empty, day-one retrieval is empty until the cron jobs (signal-detector, meeting ingestion, idea ingest) start populating the brain. Either way, the graph richness compounds: Tan's own brain — tens of thousands of pages, thousands of people, hundreds of companies — was built over a decade-plus of notes ingested into the system, and the typed entity edges grow more useful as the page count grows.

Hindsight is designed to be useful immediately. Day-one agents retain and recall against a structured store with no manual schema curation; entity resolution and reranking work from the first write. Long-term value still compounds — more memories produce richer graphs and more reflection material — but the operator doesn't have to wait for ingestion cron jobs to populate a corpus.

If you want a markdown brain that is uniquely yours and you have notes to seed it with (or are happy to grow it from scratch), GBrain rewards that investment. If you need memory that an agent (or a fleet of agents) can rely on starting next week with no curation overhead, Hindsight is purpose-built for that.

Use Case Fit

| If you want… | Pick |

|---|---|

| A markdown-first knowledge base you read, edit, and version-control yourself | GBrain |

| A personal brain you author skills for and grow over years | GBrain |

| Memory you want to own as plain text in a git repo | GBrain |

| Persistent memory for Hermes Agent with zero skill authoring (hermes memory setup) | Hindsight |

| Memory infrastructure for an agent product serving end users | Hindsight |

| Drop-in integration with Claude Code, Cursor, Codex, CrewAI, LangGraph, LlamaIndex, n8n, Dify, Pipecat, etc. | Hindsight |

| Multi-tenant memory with isolation guarantees | Hindsight |

| Time-aware queries and multi-hop graph traversal at retrieval | Hindsight |

| Beliefs that auto-reconcile contradictions across thousands of memories | Hindsight |

| SOTA-grade long-horizon retention without authoring schema or skills yourself | Hindsight |

| A managed memory layer with one-click OAuth 2.1 MCP onboarding | Hindsight (cloud) |

There's overlap — both systems are used by individual operators running personal agents — but the centers of gravity are different. GBrain centers on a single operator who wants to author the patterns their brain follows. Hindsight centers on agents (personal or product-facing) that need a memory layer to do its job without the operator authoring schema or skills.

Ecosystem and Integrations

This is one of the largest practical differences between the two and worth a section of its own.

GBrain ships as a brain for two specific agents. The repo's openclaw.plugin.json is a first-class skill pack for OpenClaw. The README and INSTALL_FOR_AGENTS.md reference both OpenClaw and Hermes Agent as the supported install targets. Beyond those two, the integration surface is what an operator builds via the MCP server (gbrain serve --http) and the 30+ MCP operations.

Hindsight ships official integrations across every major category that touches AI agents. As of writing, the hindsight-integrations directory and docs-integrations/ cover:

- Coding tools and IDEs: Claude Code, Cursor, Codex CLI, OpenCode, OpenClaw, NemoClaw, Context Forge

- Personal agents: Hermes Agent (`hermes memory setup → hindsight` is a native provider, and Hermes users are a major Hindsight audience), ChatGPT, Claude Desktop, Perplexity

- Agent frameworks: AG2, AgentCore, Agno, Autogen, CrewAI, LangGraph, LlamaIndex, OpenAI Agents SDK, Pydantic AI, SmolAgents, Strands, Vercel AI SDK

- Orchestration and no-code: n8n, Dify, Pipecat (voice), Paperclip

- LLM gateways: LiteLLM (100+ providers transparently)

The Hermes integration is worth dwelling on because it overlaps directly with one of GBrain's two supported agents. A large number of Hindsight customers are personal-agent operators running Hermes — they wire memory in with hermes memory setup, pick hindsight from the provider list, paste an API key, and the retain/recall/reflect loop runs automatically. There is no skill to author. That is a different product from GBrain even when both are used as the memory layer for the same agent: with GBrain, you author the patterns; with Hindsight, the platform synthesizes structure from raw facts.

If your stack today includes a coding tool, an agent framework, an orchestrator, an LLM gateway, or a personal agent, there's a strong chance Hindsight already has a documented integration path for it. GBrain is purpose-built for two agents and asks you to integrate everything else yourself.

Production Considerations

A few practical notes on running each in production.

GBrain is a single-operator system by design. PGLite makes local-only setups trivial, and the standalone CLI runs on macOS or Linux out of the box. The moment you want shared brain access — say, two collaborators or one agent across two machines — you switch from PGLite to Postgres, manage the brain repo's git operations across machines, and keep the index in sync with the markdown. The remote-MCP HTTP server (gbrain serve --http) ships OAuth 2.1 with a per-client scope model and an embedded admin dashboard, so multi-client access is a first-class path; multi-operator access is not the design center. The system is also young (v0.30 at the time of writing, with frequent breaking changes), so expect to read code and track GitHub issues if you push it hard.

Hindsight can be operated as a service or self-hosted. The managed cloud handles isolation, scaling, and durability for teams that want memory as a service. Self-hosted deployments are first-class and ship with several paths: a single-container Docker image (with full and slim variants), a Helm chart for Kubernetes, and bare-metal pip install on Linux, macOS, or Windows. Postgres is pluggable — embedded pg0 for development, or external Postgres 14+ via Supabase, Neon, Azure Database, AlloyDB, RDS, Cloud SQL, or self-hosted, with your choice of vector extension (pgvector, pgvectorscale, vchord, or ScaNN). There is no markdown to keep in sync either way — the structured store is the source of truth. The trade-off is that you don't own the source of truth as plain text; you own it through the API.

If your team has SREs, Hindsight is straightforward to operate. If your team is a single person who wants to read their brain in vim, GBrain is the right fit.

Frequently Asked Questions

Is GBrain a Hindsight alternative? Sort of. They overlap on the surface — both are agent memory systems with hybrid search and MCP. But GBrain optimizes for a single operator with a personal brain, and Hindsight optimizes for agents serving users. If you're evaluating GBrain because you want managed memory infrastructure, look at Hindsight directly. If you're evaluating Hindsight because you want a markdown-first brain you own end-to-end, look at GBrain.

Can I use them together? Yes. GBrain can serve as the brain repo for a single operator, and Hindsight can serve as the agent's external memory for that operator's products. The contract-first design of both systems means there's no conflict — they live at different layers. Several teams have started prototyping this combination.

Which one is better for OpenClaw or Hermes Agent? Both work with both agents, and which one to pick depends on what you want from the memory layer rather than which agent you run. Hermes ships hermes memory setup with hindsight as a native provider, and a large fraction of Hindsight's user base runs personal agents on Hermes — so "Hermes user" is not a reason to pick GBrain. Pick GBrain if you want to author skills and own a markdown brain. Pick Hindsight if you want memory to be a service the agent calls — retain, recall, reflect — with observations and mental models maintained automatically.

What about price? Both are MIT-licensed and free to self-host; you pay for your own Postgres instance and your own LLM calls. Hindsight additionally offers a paid managed cloud for teams that don't want to operate the stack themselves. For pricing on the cloud tier, see Vectorize pricing.

How does GBrain compare to Mem0, Zep, or Letta? GBrain sits closer to the personal-brain category than to those production-memory products. For agent memory shopping that includes Mem0/Zep/Letta, see Hindsight vs Mem0 and the comparison of all 8 major frameworks.

Further Reading

- What is GBrain? Garry Tan's AI Agent Memory System Explained — foundational explainer for GBrain

- GBrain alternatives: 5 best agent memory systems — when GBrain isn't the right fit

- GBrain Review: honest assessment of Garry Tan's AI memory system — strengths, weaknesses, and verdict

- GBrain vs Hindsight vs Mem0 vs Zep: Agent Memory Compared — four-way comparison across the leading memory systems

- What is agent memory — foundational concepts

- Best AI agent memory systems compared — full comparison of agent memory frameworks

- Hindsight vs Mem0 — the closest sibling comparison

- OpenClaw vs Hermes Agent: Memory Compared — the two agents GBrain ships skill packs for

- Agent memory vs RAG — how agent memory differs from retrieval-augmented generation

- How do AI agents learn? — the 4 mechanisms behind agent improvement, and where skills vs memory fit

GBrain is a remarkable artifact — a working personal memory system from one of the sharpest operators in tech, released MIT and ready to fork. If you want to author the patterns your brain follows and own the source of truth as plain markdown, install it. If you want memory to be a service your agent calls — whether that agent is Hermes, Claude Code, Cursor, a CrewAI workflow, an n8n orchestration, or an end-user-facing product — evaluate Hindsight. The two systems automate different layers and reward different kinds of operators.