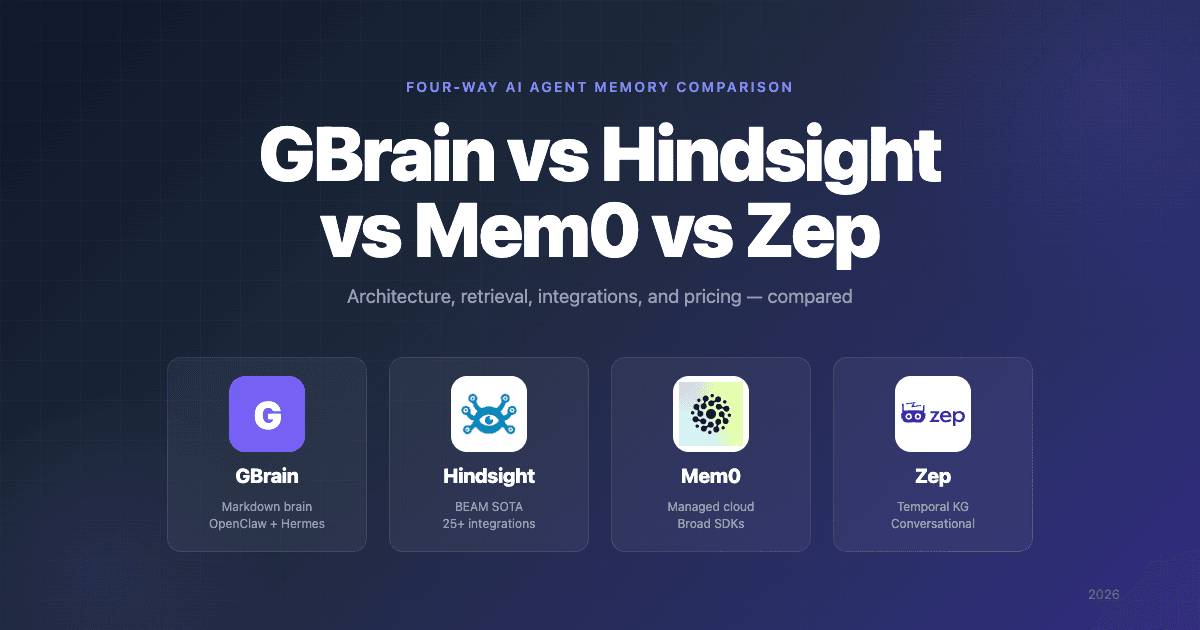

GBrain vs Hindsight vs Mem0 vs Zep: Memory Compared

Four AI agent memory systems dominate the conversation right now: GBrain, the markdown-first personal brain Y Combinator CEO Garry Tan open-sourced in April; Hindsight by Vectorize, the production memory platform with BEAM SOTA at 10M tokens; Mem0, the most established commercial memory-as-a-service; and Zep, the temporal-knowledge-graph option for conversational AI.

Each is excellent for the audience it's designed for. None is the right answer for everyone. This GBrain vs Hindsight vs Mem0 vs Zep comparison covers what each system actually is, how the architectures differ, where the retrieval design wins, what integrations ship out of the box, what they cost, and which one fits which kind of team.

At-a-Glance Verdict

- Pick GBrain if you run OpenClaw or Hermes Agent, want to author skill workflows yourself, and value plain-text markdown ownership of your brain.

- Pick Hindsight if you want production memory infrastructure that synthesizes structure from raw facts automatically (observations + mental models), with 25+ first-class integrations and a managed cloud option.

- Pick Mem0 if you want a mature managed service with broad SDK language coverage and don't need graph or temporal reasoning.

- Pick Zep if your application is conversational-first and temporal-graph reasoning is a hard requirement.

The detailed comparison is below.

What Each System Is

GBrain

GBrain is an open-source markdown-first knowledge system released by Garry Tan on April 5, 2026. ~14,000 GitHub stars, MIT license. Three layers: a Brain Repo (Markdown files in git), GBrain Retrieval (Postgres + pgvector with hybrid search), and an AI Agent Skills layer (34 markdown workflow files plus 30+ contract-first operations). Built specifically for OpenClaw and Hermes Agent operators running personal brains. Self-hosted only — no managed cloud. PGLite (WASM Postgres) for zero-config local mode.

For a deeper explainer, see What Is GBrain?

Hindsight

Hindsight is a production agent memory platform built by Vectorize and released in December 2025. ~12,800 GitHub stars, MIT license. Three core operations: retain, recall, reflect. Background consolidation creates observations — evidence-grounded beliefs with proof counts, freshness trends, and contradiction reconciliation — without operator-defined patterns. Mental models are user-defined topics that auto-refresh as new facts arrive. Multi-strategy retrieval (TEMPR) runs semantic + BM25 + graph traversal + temporal reasoning in parallel, fused via RRF and reranked with a cross-encoder. Available as managed cloud or self-hosted via Docker, Helm, or bare-metal pip install.

Mem0

Mem0 is the most established commercial agent memory layer, with broad SDK language support (Python, Node, Go) and a fully managed cloud service. Server-side LLM extraction creates facts and preferences from conversations automatically. Dual memory scope (user-level and session-level). Single-strategy semantic retrieval with metadata filtering. Self-hosting exists but is less polished than the cloud experience.

Zep

Zep is a long-term memory store designed specifically for conversational AI applications. Its distinctive feature is a temporal knowledge graph — every fact is timestamped so the agent knows when something was true. Strong on session management and user-level scoping. Cloud-managed; self-hosting is via Graphiti (the underlying graph engine), which is more involved.

Quick Comparison Table

| Dimension | GBrain | Hindsight | Mem0 | Zep |
|---|---|---|---|---|
| Created by | Garry Tan (YC CEO) | Vectorize | Mem0 Inc. | Zep |
| Released | Apr 2026 | Dec 2025 | 2023 | 2023 |
| License | MIT | MIT | OSS + paid cloud | OSS + paid cloud |
| Source of truth | Markdown in git | Structured store | Cloud DB | Temporal KG |
| Auto-learning | Operator-authored skills on cron | Observations + mental models (auto) | Fact extraction | Fact + temporal extraction |
| Retrieval | Hybrid (HNSW + tsvector + RRF) | Multi-strategy (semantic + BM25 + graph + temporal) + reranker | Semantic + metadata | Semantic + temporal graph |
| Multi-hop graph at retrieval | Has edges, not primary | Yes | No | Yes |
| Temporal reasoning | No | Yes (native) | No | Yes (graph-scoped) |
| Cross-encoder reranking | Backlink boost | Yes | No | Basic |
| Managed cloud | No | Yes | Yes | Yes |
| Self-host | Yes (PGLite or Postgres) | Yes (Docker, Helm, pip on Linux/macOS/Windows) | Limited | Via Graphiti |
| Multi-tenant by design | No | Yes | Yes | Yes |
| Model Context Protocol (MCP) server | Native + OAuth 2.1 (gbrain serve --http) | Native + OAuth 2.1 (cloud) | SDK | SDK |
| Official integrations | OpenClaw + Hermes (skill packs) | 25+ across coding tools, frameworks, orchestration, gateways | Major frameworks via SDK | Major frameworks via SDK |
| Benchmark highlight | BrainBench: P@5 49.1%, R@5 97.9% (240-page corpus) | BEAM 10M tokens: 64.1% (SOTA) | LongMemEval: varies by methodology | LongMemEval: solid conversational results |
| GitHub stars | ~14,000 | ~12,800 | High | Moderate |

Architecture: Markdown-First vs Structured-First vs Conversational-First

The four systems differ most sharply on what they treat as the source of truth and where automation lives.

GBrain treats human-readable markdown as primary. The agent reads, writes, and reasons against .md files in a git repo. The Postgres index serves queries; the markdown is what you keep, edit, and version-control. Automation runs operator-authored skills on cron — the signal-detector skill captures entity mentions on every message, enrich updates person and company pages, maintain audits citations and detects stale pages. The intelligence lives in markdown skill files the operator owns.

Hindsight treats the structured store as primary. Memories enter via retain, get extracted into facts, get consolidated into observations in the background, and feed mental models the operator defines as named topics. Automation is generative — the system synthesizes new structure (observations) from raw facts without operator-authored patterns. When new evidence contradicts an existing observation, the engine reconciles the contradiction by capturing the journey ("User was previously a React enthusiast, has since switched to Vue") rather than overwriting silently. The structured graph is the system of record; markdown isn't part of the design.
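To make the "capture the journey" idea concrete, here is a toy sketch of a belief that keeps its history when contradicting evidence arrives. It is not Hindsight's data model or API: the `Observation` class, its fields, and the naive "latest evidence wins" rule are all assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Observation:
    """Hypothetical belief record: current value, supporting evidence count, and prior values."""
    topic: str
    belief: str
    proof_count: int = 1
    history: list = field(default_factory=list)

    def add_evidence(self, value: str) -> None:
        if value == self.belief:
            # Agreeing evidence strengthens the current belief.
            self.proof_count += 1
        else:
            # Contradicting evidence: keep the old belief in history instead of overwriting it.
            self.history.append(self.belief)
            self.belief = value
            self.proof_count = 1

    def summary(self) -> str:
        if not self.history:
            return f"{self.topic}: {self.belief}"
        return f"{self.topic}: previously {', then '.join(self.history)}; now {self.belief}"


obs = Observation("frontend framework", "React")
obs.add_evidence("React")  # second piece of agreeing evidence
obs.add_evidence("Vue")    # contradiction: React moves into history
print(obs.summary())
```

A production system would weigh evidence counts and freshness before flipping a belief; the point of the sketch is only that the earlier value survives as part of the record.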

Mem0 treats the cloud DB as primary. Memories are extracted server-side by an LLM and stored as facts and preferences with user-scoped and session-scoped metadata. Single-strategy retrieval — embed the query, find nearest neighbors, return. Simpler than the others, with the trade-off that complex queries (multi-hop, time-aware) don't have specialized retrieval paths.

Zep treats the temporal knowledge graph as primary. Conversations get extracted into entities and timestamped facts. Every fact knows when it was true, which supports queries like "what did we discuss in March that we don't talk about anymore?" The architecture is purpose-built for conversational AI where temporal context shifts matter.

Retrieval

The single most important section of any agent memory comparison.

GBrain: Hybrid Search with Multi-Query Expansion

A query into GBrain follows: optional Claude Haiku query expansion (2 alternative phrasings) → vector search (HNSW cosine over pgvector) → keyword search (Postgres tsvector, parallel) → RRF merge with score = Σ(1/(60 + rank)) → 4-layer dedup → backlink-boosted ranking. GBrain's published BrainBench numbers report P@5 49.1% and R@5 97.9% on a 240-page Opus-generated corpus, beating the same system with the graph layer disabled by +31.4 points P@5. The graph contributes more lift than hybrid search alone — but the graph is used for ranking, not multi-hop traversal at query time.
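The RRF merge step in that pipeline is small enough to sketch. The snippet below is a minimal illustration of the formula score = Σ(1/(60 + rank)), not GBrain's code: the function name, the sample page IDs, and the choice of 1-indexed ranks are assumptions.

```python
from collections import defaultdict


def rrf_merge(rankings, k=60):
    """Fuse ranked lists of document IDs with Reciprocal Rank Fusion."""
    scores = defaultdict(float)
    for ranking in rankings:
        # Assumption: ranks start at 1, so the top hit contributes 1 / (k + 1).
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by ID so the output is deterministic.
    return sorted(scores.items(), key=lambda item: (-item[1], item[0]))


vector_hits = ["page-a", "page-b", "page-c"]   # hypothetical vector-search ranking
keyword_hits = ["page-b", "page-d", "page-a"]  # hypothetical keyword-search ranking
fused = rrf_merge([vector_hits, keyword_hits])
print([doc_id for doc_id, _ in fused])
```

Because RRF uses only rank positions, it can combine cosine similarities and tsvector scores without normalizing them onto a common scale. Pages that appear in both lists (page-a, page-b) rise above pages that appear in only one.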

Hindsight: TEMPR (Four Parallel Strategies)

Hindsight's retriever runs four strategies on every query: semantic search (vector similarity), BM25 keyword, graph traversal (multi-hop across typed entity edges), and temporal reasoning (native handling of time-based queries). Results fuse via RRF and re-score through a cross-encoder reranker. Hindsight currently holds SOTA on the BEAM 10M-token benchmark at 64.1%, a 58% relative lead over the next-best system at 40.6%. Graph traversal and temporal reasoning are the two strategies that neither GBrain nor Mem0 ships as primary.
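The overall shape of that flow (fan out, fuse, rerank) can be sketched as below. Every name here is a hypothetical stand-in: the four strategy functions return hard-coded rankings, and `toy_rerank_score` is a word-overlap placeholder where a real system would call a cross-encoder model. None of it is Hindsight's implementation.

```python
from concurrent.futures import ThreadPoolExecutor

MEMORIES = {  # hypothetical memory store: ID -> text
    "m1": "user likes hiking in summer",
    "m2": "user switched from React to Vue in March",
    "m3": "user asked about Vue routing last week",
    "m4": "React hooks tutorial bookmarked",
    "m5": "team adopted Vue for the dashboard",
}

# Stand-ins for the four strategies; each returns a ranked list of memory IDs.
def semantic(query): return ["m1", "m2", "m3"]
def bm25(query): return ["m2", "m4"]
def graph(query): return ["m5", "m2"]
def temporal(query): return ["m3", "m5"]


def toy_rerank_score(query, text):
    """Placeholder for a cross-encoder: counts words shared by query and text."""
    return len(set(query.lower().split()) & set(text.lower().split()))


def retrieve(query, top_k=3, k=60):
    strategies = [semantic, bm25, graph, temporal]
    # Run all strategies concurrently and collect their rankings.
    with ThreadPoolExecutor(max_workers=len(strategies)) as pool:
        rankings = list(pool.map(lambda strategy: strategy(query), strategies))
    # Fuse with Reciprocal Rank Fusion (1-indexed ranks assumed).
    fused = {}
    for ranking in rankings:
        for rank, memory_id in enumerate(ranking, start=1):
            fused[memory_id] = fused.get(memory_id, 0.0) + 1.0 / (k + rank)
    candidates = sorted(fused, key=lambda m: (-fused[m], m))[: top_k * 2]
    # Rerank the fused candidates with the (toy) pairwise scorer.
    return sorted(candidates, key=lambda m: (-toy_rerank_score(query, MEMORIES[m]), m))[:top_k]


results = retrieve("when did the user switch to Vue")
print(results)
```

The design point is that fusion decides which candidates are worth scoring, and the reranker, which is the expensive step, only sees that short list.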

Mem0: Semantic + Metadata

Mem0's retrieval is straightforward — embed the query, find nearest vectors, filter by metadata (user ID, session ID, tags). No keyword search, no graph traversal, no temporal reasoning, no reranker. This is fine at small scale and for queries that look like the embedded text. It struggles when queries require precision (specific term match) or compositional reasoning (multi-entity, time-aware).
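As a rough picture of single-strategy retrieval, the sketch below filters by metadata and then ranks by cosine similarity. It is not Mem0's SDK or storage layout: the in-memory list, the three-dimensional vectors, and the `search` function are assumptions for illustration, and a real deployment would get vectors from an embedding model.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


# Hypothetical stored memories with toy embeddings and a user scope.
MEMORIES = [
    {"text": "prefers dark mode", "vector": [0.9, 0.1, 0.0], "user_id": "u1"},
    {"text": "works at Acme", "vector": [0.1, 0.9, 0.0], "user_id": "u1"},
    {"text": "prefers light mode", "vector": [0.95, 0.05, 0.0], "user_id": "u2"},
    {"text": "uses vim keybindings", "vector": [0.7, 0.2, 0.1], "user_id": "u1"},
]


def search(query_vector, user_id, top_k=2):
    # Metadata filter first: only this user's memories are candidates.
    scoped = [m for m in MEMORIES if m["user_id"] == user_id]
    # Then rank the survivors by similarity to the query embedding.
    ranked = sorted(scoped, key=lambda m: cosine(query_vector, m["vector"]), reverse=True)
    return [m["text"] for m in ranked[:top_k]]


hits = search([1.0, 0.0, 0.0], user_id="u1")
print(hits)
```

Nothing in this path looks at exact terms, entity links, or dates, which is why precision-sensitive and compositional queries are the weak spot.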

Zep: Semantic + Temporal Graph

Zep combines semantic search with the temporal knowledge graph. Queries can scope to time ranges and follow entity relationships through the graph. Stronger than Mem0 on conversational time-shift queries, weaker than Hindsight on broader retrieval (no BM25 keyword, no general-purpose multi-strategy fusion).
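A small sketch shows what "every fact knows when it was true" buys at query time. The `Fact` record, its field names, and the half-open validity interval are assumptions chosen for illustration; they are not Zep's or Graphiti's schema.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class Fact:
    """Hypothetical timestamped fact: true from valid_from up to (not including) valid_to."""
    subject: str
    predicate: str
    obj: str
    valid_from: date
    valid_to: Optional[date] = None  # None means the fact is still true


def facts_as_of(facts, when):
    """Facts that were true on the given date."""
    return [f for f in facts
            if f.valid_from <= when and (f.valid_to is None or when < f.valid_to)]


def no_longer_true(facts, when):
    """Facts that had already expired by the given date."""
    return [f for f in facts if f.valid_to is not None and f.valid_to <= when]


FACTS = [
    Fact("user", "works_at", "Acme", date(2024, 1, 10), date(2025, 3, 1)),
    Fact("user", "works_at", "Globex", date(2025, 3, 1)),
    Fact("user", "lives_in", "Berlin", date(2023, 6, 1)),
]

print([f.obj for f in facts_as_of(FACTS, date(2024, 6, 1))])
print([f.obj for f in no_longer_true(FACTS, date(2025, 6, 1))])
```

Closing the old fact's interval instead of deleting it is what lets the same store answer both "where does the user work now?" and "where did they work last year?".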

Integration Breadth

This is where the practical experience of adopting each system diverges sharply.

GBrain ships first-class skill packs for OpenClaw and Hermes Agent. Everything else integrates through the MCP server you stand up yourself (gbrain serve for stdio, gbrain serve --http for OAuth 2.1). No first-party packages for Claude Code, Cursor, CrewAI, LangGraph, n8n, or other tools.

Hindsight ships 25+ official integrations across every major category at hindsight.vectorize.io/sdks/integrations/<name>:

- Coding tools and IDEs: Claude Code, Cursor / Windsurf (chat), Codex CLI, OpenCode, OpenClaw, NemoClaw

- Personal agents: Hermes Agent (hermes memory setup → hindsight is a native provider), ChatGPT, Perplexity

- Agent frameworks: CrewAI, LangGraph, LlamaIndex, AutoGen, Agno, Pydantic AI, SmolAgents, Strands, Vercel AI SDK, OpenAI Agents SDK

- Orchestration: n8n, Dify, Pipecat

- LLM gateways: LiteLLM (100+ providers transparently)

Mem0 ships SDKs for Python, Node, and Go, with documented integrations for major frameworks (LangChain, CrewAI, LlamaIndex). Less category breadth than Hindsight, similar to Zep.

Zep ships SDKs for Python and TypeScript, with documented integrations for the major frameworks. Heavily oriented toward conversational AI use cases rather than coding agents or orchestration.

For teams whose stack is Claude Code, Cursor, n8n, Pipecat, or another coding tool or orchestrator, Hindsight is the only one of the four that ships a first-class integration. With the other three, the operator wires it up through an SDK or an MCP server.

Self-Host vs Managed Cloud

| | GBrain | Hindsight | Mem0 | Zep |
|---|---|---|---|---|
| Managed cloud | No | Yes | Yes (primary) | Yes (primary) |
| Self-host: Docker | docker-compose for tests | Yes (full and slim variants) | Limited | Via Graphiti |
| Self-host: Kubernetes | Manual | Helm chart | Limited | Via Graphiti |
| Self-host: Bare metal | bun install && bun link | pip install on Linux/macOS/Windows | Limited | Via Graphiti |
| Zero-config local mode | PGLite (WASM Postgres) | Embedded pg0 Postgres | Local SQLite for dev | Limited |
| Vector backend | pgvector | pgvector / pgvectorscale / vchord / ScaNN | Internal | Internal graph + vector |
| Pluggable LLM provider | OpenAI required, Anthropic optional | Any (LiteLLM-compatible) | Server-side | Server-side |

Honest read: GBrain has the simplest self-host path (PGLite means no DB server) but no managed cloud at all. Hindsight has the most flexible self-host story (Docker / Helm / pip across three OSes, four vector extensions, any LLM provider) plus a managed cloud. Mem0 and Zep are cloud-first; their self-host paths exist but aren't where the polish goes.

Pricing

Numbers below reflect public pricing as of writing.

| | GBrain | Hindsight | Mem0 | Zep |
|---|---|---|---|---|
| Self-host base | Free (MIT) | Free (MIT) | Limited | Via Graphiti (AGPL/permissive split) |
| Managed cloud free tier | N/A | Yes | Yes (10K memories) | Limited |
| Managed cloud paid plans | N/A | Usage-based | Hobby $19, Growth $79, Pro $249 | Per-seat / usage-based |
| LLM cost | Operator pays (OpenAI required) | Operator pays (any provider) | Included in cloud | Included in cloud |
| Storage cost | Operator pays (PGLite free, Postgres your choice) | Operator pays (self-host) or included (cloud) | Included | Included |
| Enterprise / custom plan | N/A | Yes | Yes (custom) | Yes |

In practice: GBrain self-host is single-digit dollars per month for an active personal brain. Hindsight self-host is similar; Hindsight Cloud has a free tier plus usage-based paid plans (see Vectorize pricing). Mem0 and Zep cloud plans scale steeply with usage and number of users.

Use Case Fit

| If you want… | Pick |
|---|---|
| A markdown-first knowledge base you author skills for and own end-to-end | GBrain |
| To run OpenClaw or Hermes Agent with operator-authored patterns | GBrain |
| Memory infrastructure for an agent product serving end users | Hindsight |
| Drop-in integration with Claude Code, Cursor, CrewAI, LangGraph, n8n, etc. | Hindsight |
| Beliefs that auto-reconcile contradictions across thousands of memories | Hindsight |
| Multi-hop graph traversal AND temporal reasoning at retrieval | Hindsight |
| SOTA-grade long-horizon retention without authoring schema or skills | Hindsight |
| A managed memory layer with one-click OAuth 2.1 MCP onboarding | Hindsight (cloud) |
| The most established commercial managed service with broad SDK language coverage | Mem0 |
| Conversational AI where temporal-graph context shifts matter most | Zep |
| Enterprise compliance posture for conversational user-scoped memory | Zep |

Decision Framework

The honest framing of the four systems:

- GBrain is a personal brain for one operator. It rewards the operator who wants to author skill workflows and own markdown source-of-truth. It is structurally a different product class from Mem0 / Zep / Hindsight, which are agent memory platforms.

- Hindsight is agent memory infrastructure. It rewards teams that want memory the agent calls (retain, recall, reflect) without operator-authored patterns, with 25+ integrations across the categories agents actually run in.

- Mem0 is the established commercial managed service. It rewards teams that want broad SDK coverage and the lowest operational overhead, accepting weaker retrieval (single-strategy) as the trade-off.

- Zep is conversational-first temporal memory. It rewards teams whose primary axis is "what did we discuss when," accepting narrower scope as the trade-off.

If you're shopping in this category, the question to start with isn't "which has the best benchmark" — it's "which audience am I." For most teams looking at GBrain after the YC-CEO launch buzz, the honest answer is that you wanted memory infrastructure rather than a personal brain, in which case Hindsight is the closer fit.

For deeper head-to-head: see GBrain vs Hindsight, Hindsight vs Mem0, and Hindsight vs Zep.

Frequently Asked Questions

Which one has the best benchmark scores? Different systems publish different benchmarks, which makes apples-to-apples hard. Hindsight holds SOTA on BEAM at 10M tokens (64.1%, with the next-best at 40.6%). GBrain's published BrainBench numbers (P@5 49.1%, R@5 97.9% on a 240-page corpus) are internal evaluations not directly comparable to academic benchmarks. Mem0 and Zep have published LongMemEval results that vary by methodology (model used, retrieval mode, top-k). The most defensible read: Hindsight is the only one with SOTA on a long-horizon benchmark.
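For readers unfamiliar with the P@5 and R@5 figures quoted for BrainBench, the sketch below shows how the two metrics are commonly computed for a single query. It is the standard textbook definition, not BrainBench's evaluation harness, and the page IDs are made up.

```python
def precision_recall_at_k(retrieved, relevant, k=5):
    """Precision@k and recall@k for one query.

    retrieved: ranked list of document IDs returned by the system.
    relevant:  set of document IDs judged relevant for the query.
    """
    top_k = retrieved[:k]
    hits = sum(1 for doc_id in top_k if doc_id in relevant)
    precision = hits / k  # share of the top k that is relevant
    recall = hits / len(relevant) if relevant else 0.0  # share of relevant docs found
    return precision, recall


retrieved = ["p1", "p7", "p3", "p9", "p4"]  # hypothetical top-5 result list
relevant = {"p1", "p3"}                      # hypothetical ground truth: two relevant pages
p_at_5, r_at_5 = precision_recall_at_k(retrieved, relevant)
print(p_at_5, r_at_5)
```

When a query has only two relevant pages, P@5 cannot exceed 40% even if both are found, while R@5 reaches 100%. That is one way a system can post high recall alongside much lower precision at the same cutoff.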

Can I use GBrain with Mem0 or Hindsight? GBrain is structurally a different product (markdown brain, operator-authored skills), so they don't directly conflict. Some teams use GBrain as a personal markdown brain on top of an agent that also uses Hindsight or Mem0 as its production memory layer. Different layers, different jobs.

Is the YC-CEO factor inflating GBrain? A bit, yes. GBrain has more visibility than its young version number would normally command. The architecture is genuinely thoughtful and the BrainBench numbers are honest, but the brand halo is doing some of the work. Strip the YC-CEO branding and GBrain would still be one of the better personal-brain projects in the category; with it, it's also benefiting from outsized attention.

What about Letta, Cognee, SuperMemory, MemPalace? Out of scope for this four-way, but covered in GBrain alternatives (Letta, Cognee, SuperMemory) and MemPalace alternatives (MemPalace). The four-way here covers the systems most likely to come up when an evaluator is comparing GBrain against established production memory layers.

Do any of these carry vendor lock-in risk? GBrain and Hindsight are both MIT-licensed with full self-host paths, so lock-in risk is minimal: your data is in Postgres and (for GBrain) markdown files you own. Mem0 and Zep cloud follow the typical SaaS lock-in story: data lives in their cloud, governed by their export tooling. Self-host options for both exist but are less polished than the cloud paths.

Further Reading

- What is agent memory? — Foundational concepts behind persistent AI memory

- Best AI agent memory systems compared — Full landscape of agent memory frameworks

- What is GBrain? — Foundational explainer for GBrain

- GBrain vs Hindsight: AI Agent Memory Compared — Two-way head-to-head

- GBrain alternatives — Five best agent memory systems if GBrain isn't the right fit

- GBrain Review: Honest Assessment — Strengths, weaknesses, and verdict

- Hindsight vs Mem0 — How Hindsight compares to Mem0

- Hindsight vs Zep — How Hindsight compares to Zep

- Agent memory vs RAG — How agent memory differs from retrieval-augmented generation

GBrain, Hindsight, Mem0, and Zep all solve real problems for real audiences. The mistake is assuming one of them is "the best" memory system — they're optimized for different operators, different stacks, and different definitions of memory. Pick by which audience you actually fit. If you started here because you saw the GBrain launch and wondered what production memory infrastructure looks like, evaluate Hindsight — observations and mental models maintained automatically across 25+ integrations is the closest production answer to what GBrain does for personal brains.